AI Pioneer Katie Parrott Integrates Human-AI Pairing in Codex-Native Apps

Serge Bulaev

Katie Parrott's essays argue that knowing when to work closely with AI and when to let it work alone is a skill in its own right. Some tasks, like bug triage, can be handed off to AI, while others, like writing emails or policies, benefit from a human working alongside the model. The real skill, Parrott says, is picking the right mode: side-by-side pairing or delegation. Early research suggests that people working with AI can produce better work, yet many AI projects still fail because of teamwork and coordination problems rather than technology. She argues that switching fluidly between modes may become a key skill for the future.

In the evolving landscape of product design, AI pioneer Katie Parrott is igniting a crucial debate around human-AI pairing. Her recent essays argue that while full delegation to AI is powerful, many tasks gain significant value from side-by-side collaboration between humans and models, a concept central to new Codex-native apps.

Parrott's insights are reshaping how developers approach interface design. The key, she suggests, lies in a new "meta-skill": learning to strategically decide whether to delegate a task to an autonomous AI agent or to engage in an iterative partnership on a shared digital canvas. This choice between modes is critical for unlocking AI's full potential.

Expanding the "Allocation Economy" for a New AI Reality

Parrott's theory builds on her earlier allocation-economy thesis, which advised treating AI models like direct reports and budgeting attention accordingly. She now argues that this framework covers only half the picture: many complex tasks, such as policy drafting or creative work, require direct human-AI collaboration within Codex-native apps.

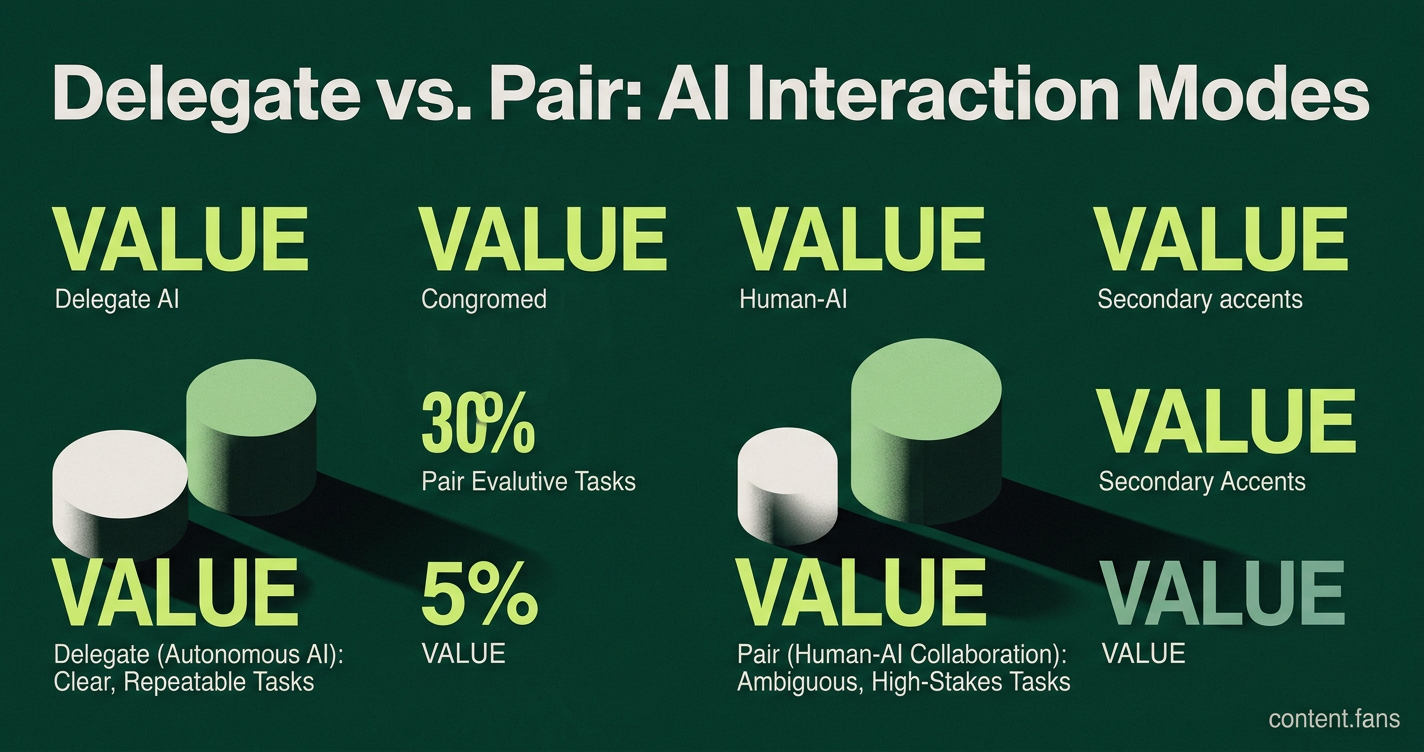

The core question is choosing the right interaction model for each task. Parrott's framework advises delegating clear, repeatable processes to autonomous AI agents. For ambiguous, high-stakes tasks requiring judgment or creativity, she recommends a collaborative "pairing" mode where the human remains in the loop for iteration and review.

Deciding When to Delegate vs. When to Pair

A task's predictability is the primary factor in choosing a mode. Clear, rule-based work like bug triage or log parsing is ideal for autonomous AI delegation. In contrast, ambiguous tasks requiring nuance, such as writing executive emails or brand copy, demand close-in pairing where human judgment guides the AI's output.

- Delegate (Hand-off): Bug triage, expense categorization, routine translations.

- Pair (Stay-close): Executive emails, policy memos, brand copy revisions.

Best Practices for a Mixed-Mode AI Workflow

- Define Rules First: Codify process rules and specifications before prompting, so the agent starts from a clear brief. Teams can write this documentation in-house.

- Triage Tasks at Intake: Immediately classify work based on whether it requires human auditability or can run autonomously.

- Maintain Action Logs: Use tools with tracked changes or timestamped action feeds to ensure every AI action is visible, auditable, and reversible.

- Budget Human Attention: Implement dashboards to monitor agent status and prevent "out of sight, out of mind" failures, treating oversight as a finite resource.
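To make the "Maintain Action Logs" practice concrete, here is a minimal Python sketch, assuming that each agent action can register its own undo step. The `Action` and `ActionLog` classes and the `triage-bot` agent are hypothetical illustrations, not features of any tool Parrott names; the point is only that every action lands in a timestamped feed and can be reverted.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass
class Action:
    """One agent action, stamped in UTC and paired with a (hypothetical) undo step."""
    timestamp: datetime
    agent: str
    description: str
    undo: Callable[[], object]
    reverted: bool = False


@dataclass
class ActionLog:
    entries: list[Action] = field(default_factory=list)

    def record(self, agent: str, description: str, undo: Callable[[], object]) -> Action:
        """Append a timestamped action so it shows up in the feed."""
        action = Action(datetime.now(timezone.utc), agent, description, undo)
        self.entries.append(action)
        return action

    def feed(self) -> list[str]:
        """Render every action, oldest first, for human auditing."""
        return [
            f"{a.timestamp.isoformat()} [{a.agent}] {a.description}"
            + (" (reverted)" if a.reverted else "")
            for a in self.entries
        ]

    def revert_last(self) -> Action:
        """Undo the most recent action that has not already been reverted."""
        for action in reversed(self.entries):
            if not action.reverted:
                action.undo()
                action.reverted = True
                return action
        raise LookupError("no action left to revert")


if __name__ == "__main__":
    labels: dict[str, str] = {}
    log = ActionLog()

    # A hypothetical triage agent labels a bug and registers how to undo it.
    labels["BUG-1"] = "p2"
    log.record("triage-bot", "labeled BUG-1 as p2", lambda: labels.pop("BUG-1"))

    log.revert_last()
    print(labels)  # {}
    print(log.feed()[0])  # the UTC timestamp, then "[triage-bot] labeled BUG-1 as p2 (reverted)"
```

Keeping the undo step next to the log entry is one design choice among many; a real product might instead rely on tracked changes or version history.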

Industry Data Underscores the Importance of Pairing

Recent research highlights the dual nature of AI integration. A joint study by researchers from MIT, Harvard, and Boston Consulting Group found that professionals using AI tools produced output of roughly 40% higher quality on the tasks studied. However, industry reports suggest that a significant portion of multi-agent AI systems struggle in production, and that coordination issues, not model flaws, cause many of these failures. Taken together, these findings suggest that while AI boosts individual performance, deploying it successfully at scale requires robust collaboration frameworks to prevent breakdowns.

The "Meta-Skill" of Mode Selection as a Career Imperative

Parrott argues that choosing the correct AI interaction mode is the next essential workplace literacy. As workflows shift from "thinking by doing" to "reviewing and choosing from outputs," the ability to classify tasks, write clear specifications, and audit AI results becomes paramount. This trend is already impacting hiring, with companies reportedly exploring ways to integrate AI utilization into employee performance evaluations.

What are the two dominant AI work modes Katie Parrott identifies?

Parrott frames today's AI usage as split between hand-off delegation and stay-close pairing.

- Delegation is letting an agent run autonomously - imagine a bot triaging bugs while you sleep.

- Pairing is side-by-side work inside the same window - you draft an email and the model edits with you line-by-line.

Both are essential, but each demands a completely different product surface. The allocation-economy thesis was only half-right because it treated every task as delegation; Parrott shows the other half wants human-AI collaboration.

How should product teams decide which mode to support?

She offers a concrete checklist in "The Dawn of Codex-native Apps."

| If the task … | Build for this mode |
|---|---|
| Has clear rules, repeatable steps, low stakes | Hand-off delegation - self-running agents |
| Requires taste, trade-offs, or rapid iteration | Stay-close pairing - shared docs, tracked changes, visible logs |

Always write down the spec first; Fortune 500 firms are paying millions for guideline documents that their teams could have produced in an afternoon. The safest heuristic: if you cannot audit and revert an agent's work, you are not ready to delegate.
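The checklist and the audit-and-revert heuristic can be expressed as a small decision function, which also shows what "Triage Tasks at Intake" might look like in practice. This is a sketch under stated assumptions: the `Task` fields and the `choose_mode` function are hypothetical names invented here to mirror the table's two rows, and real intake decisions would rest on human judgment rather than boolean flags.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    DELEGATE = "hand-off delegation"
    PAIR = "stay-close pairing"


@dataclass
class Task:
    # Hypothetical fields mirroring the checklist's criteria.
    name: str
    has_clear_rules: bool            # a written spec or rule-based steps exist
    repeatable: bool                 # the same steps apply every time
    high_stakes: bool                # mistakes are costly or highly visible
    needs_taste: bool                # requires judgment, trade-offs, rapid iteration
    auditable_and_revertible: bool   # every agent action is logged and can be undone


def choose_mode(task: Task) -> Mode:
    """Map a task onto the two rows of the checklist table."""
    fits_delegation = (
        task.has_clear_rules
        and task.repeatable
        and not task.high_stakes
        and not task.needs_taste
    )
    # Heuristic from the text: if you cannot audit and revert, you are not ready to delegate.
    if fits_delegation and task.auditable_and_revertible:
        return Mode.DELEGATE
    return Mode.PAIR


if __name__ == "__main__":
    triage = Task("bug triage", has_clear_rules=True, repeatable=True,
                  high_stakes=False, needs_taste=False, auditable_and_revertible=True)
    memo = Task("policy memo", has_clear_rules=False, repeatable=False,
                high_stakes=True, needs_taste=True, auditable_and_revertible=True)
    print(choose_mode(triage).value)  # hand-off delegation
    print(choose_mode(memo).value)    # stay-close pairing
```

Defaulting to pairing whenever any condition fails is deliberate in this sketch: delegation has to be earned, which matches the cautious tone of the heuristic above.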

What new "meta-skill" must every user learn?

The emerging meta-skill is knowing which mode fits a task and switching between modes on the fly. Industry reports suggest it is becoming a critical workplace capability alongside creativity and resilience, and research studies report significant performance gains for workers who master it. In short, success depends less on prompting and more on choosing the right AI interaction pattern.

Why do pure delegation projects fail so often?

Industry analysis points to recurring patterns. Research indicates that many multi-agent LLM systems struggle in production, and that a substantial portion of those failures stems from poor specification rather than technical bugs. Hierarchical delegation helps, but it introduces single-point-of-failure risks. The takeaway for product teams: start small, keep logs visible, and budget human review time as a first-class workflow step instead of an afterthought.
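One way to treat human review as a budgeted, first-class step is to plan it explicitly. The sketch below assumes a hypothetical queue of agent outputs, each with an estimated review time; `PendingOutput` and `plan_reviews` are invented names, and the simple greedy ordering (high-stakes first, then shortest reviews) is an illustrative choice rather than anything the source prescribes. Crucially, nothing that misses the budget is auto-approved.

```python
from dataclasses import dataclass


@dataclass
class PendingOutput:
    # Hypothetical record of an agent result waiting for human review.
    task: str
    review_minutes: int
    high_stakes: bool


def plan_reviews(queue: list[PendingOutput], budget_minutes: int) -> tuple[list[str], list[str]]:
    """Split the queue into outputs reviewed now and outputs held back.

    Held items stay pending instead of being auto-approved, so the backlog
    remains visible rather than drifting out of sight.
    """
    # Greedy order: high-stakes items first, then shorter reviews.
    ordered = sorted(queue, key=lambda o: (not o.high_stakes, o.review_minutes))
    reviewed: list[str] = []
    held: list[str] = []
    remaining = budget_minutes
    for output in ordered:
        if output.review_minutes <= remaining:
            reviewed.append(output.task)
            remaining -= output.review_minutes
        else:
            held.append(output.task)
    return reviewed, held


if __name__ == "__main__":
    queue = [
        PendingOutput("expense categorization", 10, high_stakes=False),
        PendingOutput("bug triage summary", 30, high_stakes=True),
        PendingOutput("routine translations", 15, high_stakes=False),
    ]
    reviewed, held = plan_reviews(queue, budget_minutes=45)
    print(reviewed)  # ['bug triage summary', 'expense categorization']
    print(held)      # ['routine translations']
```

A greedy pass is not an optimal packing of the budget, but it is easy to explain to reviewers, which arguably matters more when oversight itself is the scarce resource.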

How are leading companies already operationalizing these modes?

- Leading tech companies are exploring ways to evaluate employees on "AI-driven impact," measuring how effectively they choose and review AI output.

- Procter & Gamble's experiment saw human-AI teams submit 50% more ads per worker than human-only squads, and 3× more top-10% breakthrough ideas.

- Industry research recommends a hybrid architecture: a supervisor agent decomposes work while peer agents collaborate beneath it - mirroring real management structures.
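The hybrid architecture in the last bullet can be sketched in a few lines. This is a toy illustration, assuming that a "peer agent" is any function that takes a subtask plus the shared notes produced so far; `Supervisor`, `decompose`, and the round-robin assignment are hypothetical choices made here, and a production system would call real models and handle failures.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical stand-in for an agent: (subtask, shared notes so far) -> result.
PeerAgent = Callable[[str, list[str]], str]


@dataclass
class Supervisor:
    decompose: Callable[[str], list[str]]   # hypothetical planner that splits the goal
    peers: list[PeerAgent]
    shared_notes: list[str] = field(default_factory=list)

    def run(self, goal: str) -> list[str]:
        """Decompose the goal, hand subtasks to peers, and pool their results."""
        if not self.peers:
            raise ValueError("supervisor needs at least one peer agent")
        results: list[str] = []
        for i, subtask in enumerate(self.decompose(goal)):
            peer = self.peers[i % len(self.peers)]        # round-robin assignment
            result = peer(subtask, list(self.shared_notes))  # peers see earlier work
            self.shared_notes.append(result)
            results.append(result)
        return results


if __name__ == "__main__":
    def plan(goal: str) -> list[str]:
        return [f"{goal}: draft", f"{goal}: review"]

    def writer(subtask: str, notes: list[str]) -> str:
        return f"writer did '{subtask}'"

    def reviewer(subtask: str, notes: list[str]) -> str:
        return f"reviewer did '{subtask}' after {len(notes)} note(s)"

    sup = Supervisor(plan, [writer, reviewer])
    for line in sup.run("release notes"):
        print(line)
    # writer did 'release notes: draft'
    # reviewer did 'release notes: review' after 1 note(s)
```

The supervisor in this sketch is exactly the single point of failure mentioned earlier: if `decompose` produces a poor plan, every peer inherits it. Keeping `shared_notes` visible is one modest way to let a human spot that early.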

Across the board, collaboration beats delegation on quality, while delegation wins on scale. Leading products are beginning to give users explicit toggles between the two modes and surface action logs so humans stay in the driver's seat.