AI FinOps Tools Emerge to Track Escalating LLM Costs

Serge Bulaev

AI FinOps tools are being developed to help companies track and manage the rising cost of using large language models (LLMs). Reports suggest that many businesses are surprised by their LLM bills and want the same ability to see and control these costs that they already have for cloud services. Surveys suggest that a significant portion of AI startups now spend more on running models than on training them, and that a growing number of companies track their AI spending. New tools and dashboards may help companies forecast costs, spot savings, and link spending to business value, but challenges remain, including missed forecasts and gaps in AI governance. Early evidence suggests these tools might help reduce cost surprises and start important budget discussions sooner.

Specialized AI FinOps tools are emerging to track escalating LLM costs, moving from concept to funded roadmaps. Early adopters report significant cost surprises, driving demand from finance teams for the same detailed visibility they have with cloud and SaaS services.

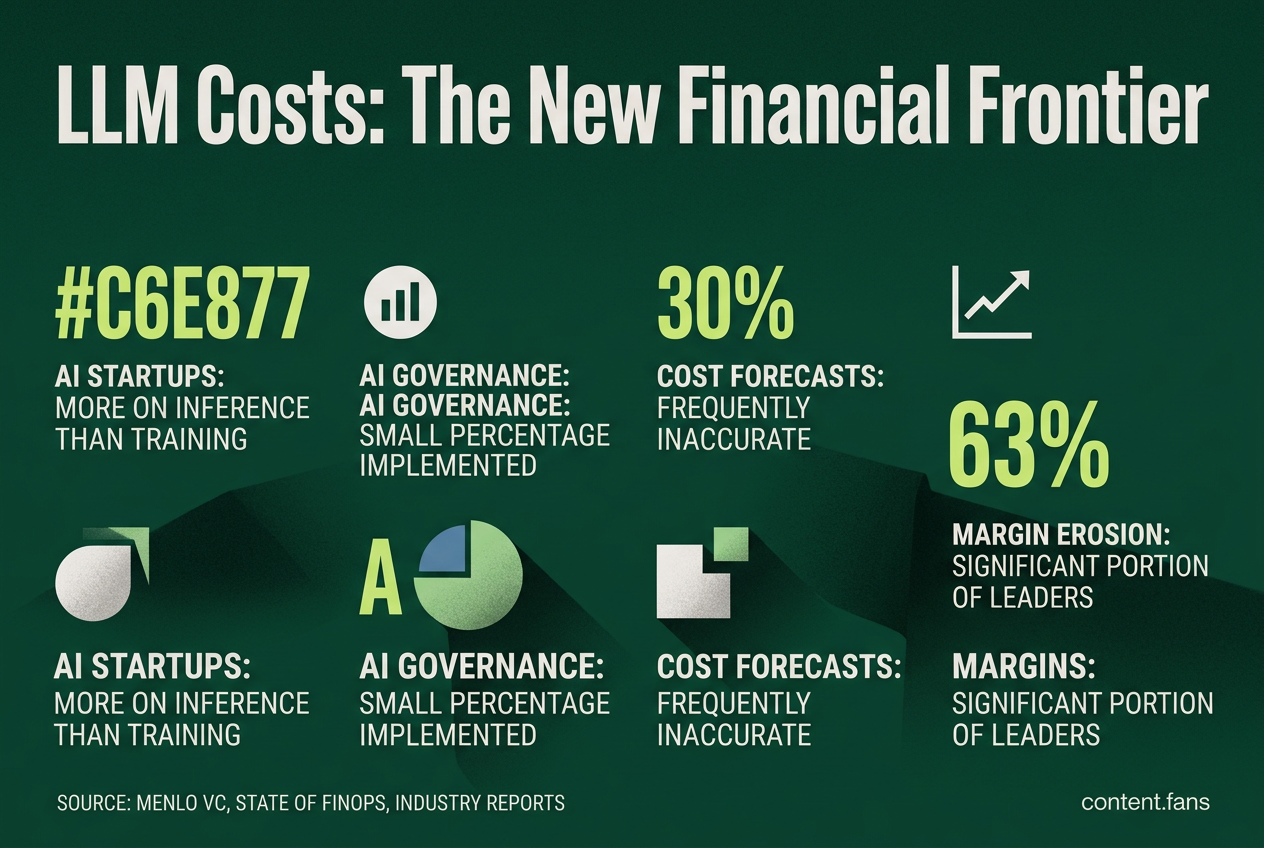

The need is urgent: research from Menlo Ventures indicates that a significant portion of AI startups now spend more on inference than on training. Concurrently, the State of FinOps survey reveals that a growing number of companies now track AI expenditures, as noted in a USU summary. This data signals a fundamental shift toward managing tokens and GPU hours as first-class budget line items.

This growing demand is giving rise to a new category of "token-finance" platforms, which focus on three core areas: forecasting costs, spotting savings, and linking spend to business value.

Why token visibility matters

Token visibility is critical because AI cost forecasts are frequently inaccurate. Without granular tracking, teams cannot attribute spend, enforce budgets, or prevent margin erosion from uncontrolled LLM usage, which leaves companies exposed to significant financial risk.

- Inaccurate Forecasts: Industry reports suggest that many enterprises miss their AI cost forecasts by a wide margin. Common culprits include hidden API retries, lengthy context windows, and unmonitored use of premium models.

- Pervasive Governance Gaps: According to industry analysis, only a small percentage of organizations have fully implemented AI governance. Without it, spend is rarely attributed to an owner, so budget caps tend to be reactive stopgaps rather than proactive controls.

- Eroding Profit Margins: Uncontrolled LLM usage is a direct threat to profitability. Industry reports suggest that a significant portion of business leaders are already seeing gross margin erosion due to unmanaged AI spending.
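To see how the forecast culprits listed above compound, here is a minimal sketch in Python. It works in tokens rather than dollars, and it assumes that retried calls are billed in full and that context growth only affects the input side. The function names, the retry rate, and the growth factor are illustrative assumptions, not figures from any report.

```python
def forecast_tokens(calls: int, input_tokens: int, output_tokens: int) -> float:
    """Naive forecast: every call succeeds once with a fixed prompt size."""
    return calls * (input_tokens + output_tokens)


def observed_tokens(
    calls: int,
    input_tokens: int,
    output_tokens: int,
    retry_rate: float,
    context_growth: float,
) -> float:
    """Same workload once retries and longer prompts are accounted for.

    retry_rate: extra billed calls per planned call (0.2 means 20% more calls).
    context_growth: multiplier on input tokens (2.0 means prompts doubled).
    Assumption: a retried call is billed like any other call.
    """
    billed_calls = calls * (1 + retry_rate)
    return billed_calls * (input_tokens * context_growth + output_tokens)


def overrun_ratio(
    calls: int,
    input_tokens: int,
    output_tokens: int,
    retry_rate: float,
    context_growth: float,
) -> float:
    """How many times larger the observed volume is than the forecast."""
    planned = forecast_tokens(calls, input_tokens, output_tokens)
    actual = observed_tokens(
        calls, input_tokens, output_tokens, retry_rate, context_growth
    )
    return actual / planned


if __name__ == "__main__":
    # Hypothetical workload: 100k calls, 1,000 input and 250 output tokens each.
    ratio = overrun_ratio(100_000, 1_000, 250, retry_rate=0.2, context_growth=2.0)
    print(f"observed / forecast = {ratio:.2f}")
```

With these made-up inputs, a 20% retry rate combined with a doubled prompt pushes token volume to roughly 2.16 times the forecast, which is the kind of gap that only shows up when retries and prompt sizes are tracked per call.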

Emerging tooling landscape

| Vendor | Core method | Token-level features |
|---|---|---|
| Helicone | Proxy logger | Auto cost from counts, zero-code routing |
| Vantage | Cloud cost platform | Native OpenAI and Anthropic ingestion, alert rules |
| AI Vyuh FinOps | SDK interceptor | Per-feature and per-user attribution in real time |
| Langfuse | Open-source traces | Combines latency, quality, and cost per call |

Current tools generally fall into two categories. The first includes broad cloud cost platforms like Vantage, which ingest LLM provider bills alongside existing cloud invoices from AWS or Azure. The second consists of LLM-native proxies like Helicone, which prioritize developer experience and can be integrated with a single environment variable.

Building dashboards, leaderboards, ROI

- Collect Rich Metadata: Tag every API call with essential identifiers like team, feature, and business unit. Open-source gateways such as LiteLLM can expose headers to streamline this process.

- Allocate Costs to Unit Economics: Map token consumption directly to key business metrics, such as cost per active user or cost per dollar of revenue. Tools like Vantage and Pay-i offer lookup tables for model pricing to facilitate this.

- Foster Healthy Competition: Implement internal leaderboards that rank projects by cost efficiency and savings achieved through optimizations like prompt tuning. Some companies review performance across dozens of LLMs monthly.

- Connect Spend to Business Value: Integrate cost data with outcome metrics (e.g., CSAT improvements, ticket deflection) into a unified dashboard. The FinOps Foundation lists "quantify business value" as a top domain for AI financial management.
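The tagging and allocation steps above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not how any of the named tools work: the `CallRecord` fields, the helper names, and the choice of average cost per call as the efficiency metric are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class CallRecord:
    """One logged LLM call with the metadata tags attached at request time."""
    team: str
    feature: str
    user_id: str
    cost_usd: float


def cost_by(records: list[CallRecord], key: str) -> dict[str, float]:
    """Total spend grouped by one tag, e.g. key='team' or key='feature'."""
    totals: dict[str, float] = defaultdict(float)
    for record in records:
        totals[getattr(record, key)] += record.cost_usd
    return dict(totals)


def cost_per_active_user(records: list[CallRecord]) -> float:
    """Unit-economics view: total spend divided by distinct users seen."""
    users = {record.user_id for record in records}
    total = sum(record.cost_usd for record in records)
    return total / len(users) if users else 0.0


def leaderboard(records: list[CallRecord], key: str = "feature") -> list[tuple[str, float]]:
    """Rank groups by average cost per call, cheapest first.

    Assumption: average cost per call is used as a stand-in for efficiency.
    """
    totals: dict[str, float] = defaultdict(float)
    counts: dict[str, int] = defaultdict(int)
    for record in records:
        group = getattr(record, key)
        totals[group] += record.cost_usd
        counts[group] += 1
    averages = [(group, totals[group] / counts[group]) for group in totals]
    return sorted(averages, key=lambda pair: pair[1])


if __name__ == "__main__":
    log = [
        CallRecord("support", "summarize", "u1", 0.5),
        CallRecord("support", "summarize", "u2", 0.25),
        CallRecord("growth", "autocomplete", "u1", 0.125),
    ]
    print(cost_by(log, "team"))
    print(cost_per_active_user(log))
    print(leaderboard(log))
```

The point of the design is that every downstream view (show-back by team, cost per user, leaderboard) is a cheap aggregation once the tags exist on each call; without them, none of these numbers can be reconstructed from a provider invoice.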

Implementation watch-outs

- Visualize Spending Patterns: Use heat-map visualizations to help finance teams identify anomalous cost spikes, such as those caused by uncontrolled agent recursion, which developers might otherwise miss.

- Balance Budgets Appropriately: Budgets are often heavily skewed toward experimentation, with industry reports noting that infrastructure and governance may receive only a small portion of funding. Adjust these ratios so that cost tooling is properly supported rather than bought and then left unused.
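Behind a heat-map, spike detection can be as simple as comparing each day's spend with a trailing baseline. The sketch below is one possible approach, assuming a plain list of daily totals; the function name, the seven-day window, and the 3x threshold are illustrative choices, not settings from any vendor.

```python
from statistics import median


def find_spikes(daily_costs: list[float], window: int = 7, factor: float = 3.0) -> list[int]:
    """Return indexes of days whose spend exceeds factor x the trailing median.

    The median of the previous `window` days is used as the baseline so that
    a single earlier spike does not inflate the comparison.
    """
    spikes = []
    for day in range(window, len(daily_costs)):
        baseline = median(daily_costs[day - window:day])
        if baseline > 0 and daily_costs[day] > factor * baseline:
            spikes.append(day)
    return spikes


if __name__ == "__main__":
    # Hypothetical daily spend in dollars; day 8 simulates a runaway agent loop.
    costs = [10.0] * 7 + [11.0, 95.0, 12.0]
    print(find_spikes(costs))
```

A median baseline is a deliberate choice here: a mean would be dragged upward by the spike itself, which can hide a second anomaly a few days later.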

While token dashboards are not a panacea, early evidence suggests they can reduce cost forecast variance. More importantly, they let teams have critical ROI and budget conversations much earlier in the development lifecycle.

What exactly is AI FinOps and why is it suddenly a top-priority purchase?

AI FinOps applies traditional cloud cost-governance practices to AI workloads, but with token-level granularity. Instead of watching a single EC2 bill, teams now juggle dozens of foundation models, each priced per million tokens and each invoked by dozens of product features. A significant portion of AI-first startups already spend more on inference than on training, according to industry reports. Enterprise buyers want the same visibility to prevent runaway spend, so "token-finance" platforms that surface cost per prompt, per user, and per feature are moving from nice-to-have to must-have.
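Per-million-token pricing reduces to simple arithmetic per call. The sketch below assumes separate input and output rates and uses a hypothetical price table; the model names and prices are placeholders, not real vendor pricing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPrice:
    """Price in USD per one million tokens, split by direction."""
    input_per_million: float
    output_per_million: float


# Hypothetical price table for illustration only.
PRICES = {
    "small-model": ModelPrice(input_per_million=0.50, output_per_million=1.50),
    "large-model": ModelPrice(input_per_million=5.00, output_per_million=15.00),
}


def call_cost_usd(
    model: str,
    input_tokens: int,
    output_tokens: int,
    prices: dict[str, ModelPrice] = PRICES,
) -> float:
    """Cost of a single call: tokens times the per-million rate, per direction."""
    price = prices[model]
    return (
        input_tokens * price.input_per_million
        + output_tokens * price.output_per_million
    ) / 1_000_000


if __name__ == "__main__":
    for name in PRICES:
        print(name, call_cost_usd(name, input_tokens=2_000, output_tokens=500))
```

Under these placeholder prices the same 2,000-in, 500-out request costs ten times more on the larger model, which is why per-feature attribution matters once dozens of features each pick their own model.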

Which vendors already offer token-tracking heat-maps and ROI dashboards?

A crop of vendors, most of them venture-backed startups, has released working software:

- Helicone - open-source proxy that logs every LLM call and auto-converts token counts into dollars with zero code changes.

- Vantage - cloud-native cost platform that ingests OpenAI and Anthropic bills natively and treats them like any AWS line item.

- AI Vyuh FinOps - lightweight SDK that intercepts calls and attributes spend to features in real time.

- Langfuse & Datadog - trace every request for quality and cost in a single pane of glass.

The tools above offer some combination of heat-maps, token leaderboards, and anomaly alerts, and they integrate with SSO, billing, and BI tools so finance teams can build charge-back reports with little engineering help.

What governance problems are enterprises trying to solve?

Many companies now miss their AI cost forecasts by a wide margin, and a substantial portion of initiatives exceed budget. Without guardrails, product teams spin up premium models for low-value tasks, context windows balloon, and finance sees the spike weeks later. Prospective buyers want:

- Hard caps by model tier, team, or feature.

- Show-back dashboards that prove which prompts drive revenue.

- Policy engines that auto-route simple tasks to cheaper small models.

- Audit trails for regulators - industry reports suggest that many AI-related breaches stem from access-control failures.

FinOps tools that package these controls are increasingly being written into vendor-security questionnaires.
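A rough idea of how hard caps, auto-routing, and an audit trail fit together is sketched below in Python. Everything here is hypothetical: the `BudgetPolicy` class, the model names, and especially the use of prompt length as a proxy for task simplicity, which is a crude stand-in for whatever classifier a real policy engine would use.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class BudgetPolicy:
    """Per-team hard caps plus a simple routing rule (illustrative only)."""
    caps_usd: dict[str, float]
    spent_usd: dict[str, float] = field(default_factory=dict)
    audit_log: list[tuple[str, float, bool]] = field(default_factory=list)
    cheap_model: str = "small-model"      # hypothetical model name
    premium_model: str = "large-model"    # hypothetical model name
    simple_prompt_max_chars: int = 500    # assumption: short prompt = simple task

    def choose_model(self, prompt: str) -> str:
        """Route short prompts to the cheap model, everything else to premium."""
        if len(prompt) <= self.simple_prompt_max_chars:
            return self.cheap_model
        return self.premium_model

    def authorize(self, team: str, estimated_cost_usd: float) -> bool:
        """Allow the call only if it keeps the team under its hard cap.

        Teams without a configured cap are denied, and every decision is
        appended to the audit log as (team, estimated cost, allowed).
        """
        cap = self.caps_usd.get(team)
        spent = self.spent_usd.get(team, 0.0)
        allowed = cap is not None and spent + estimated_cost_usd <= cap
        if allowed:
            self.spent_usd[team] = spent + estimated_cost_usd
        self.audit_log.append((team, estimated_cost_usd, allowed))
        return allowed


if __name__ == "__main__":
    policy = BudgetPolicy(caps_usd={"support": 1.0})
    print(policy.choose_model("Summarize this ticket."))
    print(policy.authorize("support", 0.75))
    print(policy.authorize("support", 0.5))
    print(policy.audit_log)
```

The key property is that the check happens before the call is made, so the cap is a proactive control rather than an alert that fires after the money is spent.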

How mature is the market - niche utilities or the next HR/ERP category?

Industry leaders predict that startups will pitch token-finance suites that sit alongside today's HR and ERP systems, and industry reports suggest that adoption of AI-cost governance has increased significantly. Analyst firms warn that, without such tooling, large firms could significantly underestimate AI infrastructure costs. The space is still fragmented, but rapid standardization around OpenTelemetry and LLM-gateway specs suggests the category is on the same trajectory as cloud FinOps six years ago - only faster.

What should buyers evaluate before signing a contract?

Ask four practical questions:

- Coverage - does it meter every model you use (OpenAI, Anthropic, open-source on GPU) or just one API?

- Integration effort - proxy (Helicone), SDK (AI Vyuh), or billing-scrape (Vantage)? Pick the method that matches your release cadence.

- Unit economics - can it map cost to business KPIs (cost per customer query, cost per closed deal)?

- Policy depth - will it block, throttle, or reroute calls in real time, or only alert after money is spent?

A platform that scores high on all four gives CFOs the confidence to scale AI without surprise invoices, and engineering the freedom to ship features without hand-built cost dashboards.