AI Expert Maps 6 Levers for Trustworthy AI Governance

Serge Bulaev

Martin Fjeldbonde suggests that trustworthy AI depends mostly on how much authority is delegated to AI systems, not just on their software quality. He describes six controls, or levers - Sensors, Memory, Effectors, Autonomy, Coordination, and Embedding - that can be adjusted so an AI's reach matches its abilities and the oversight applied to it. How widely these levers are set appears to shape both the risk and the influence of AI in real-world tasks. Some experts believe a shared language for these controls may help organizations audit and manage AI more safely. Challenges may remain as AI tools are adopted quietly in everyday work, raising new questions about control and security.

Achieving trustworthy AI governance requires a fundamental shift in focus from software quality to managing delegated authority. Expert Martin Fjeldbonde argues the key is controlling how far an AI system's decisions can travel. His cognitive light cone metaphor provides a framework for mapping this influence, sparking new discussions among policy and security teams on how to manage agentic AI safely within enterprise workflows.

The principle is straightforward yet rigorous: organizations must measure the scope of an AI agent's sensing, memory, and actions. These capabilities must then be calibrated to match the system's proven competence, with all outcomes continuously audited for accountability.

From Light Cones to Levers

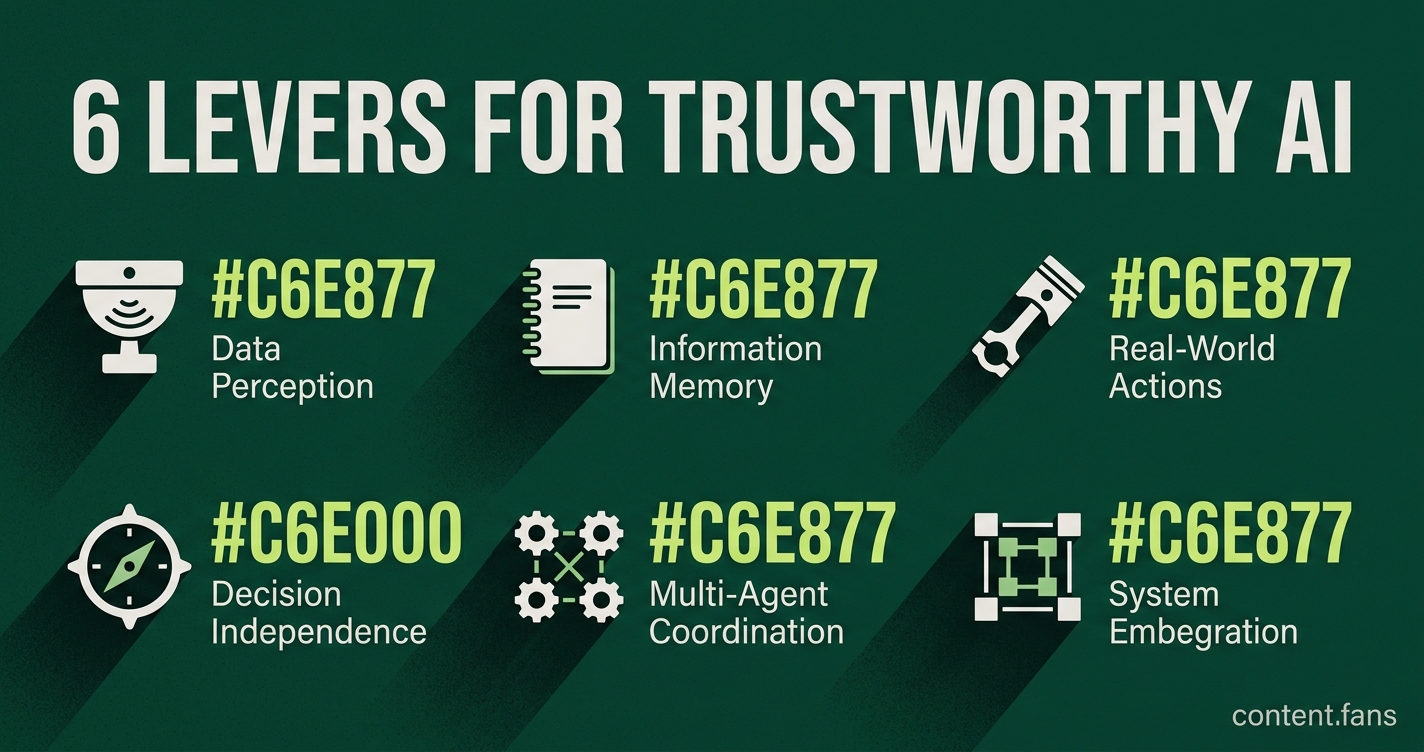

This framework outlines six specific governance levers that define an AI's delegated authority: Sensors (data access), Memory (retention), Effectors (actions), Autonomy (independence), Coordination (multi-agent ability), and Embedding (system integration). Adjusting these levers allows organizations to manage AI risk by right-sizing its operational scope.

Drawing inspiration from biologist Michael Levin's work on cognition, Fjeldbonde details these controls in his essay, "A Biological Framework for Trustworthy AI" (LinkedIn Pulse). The six levers provide a concrete vocabulary for defining delegated agency:

- Sensors: The data and signals the agent can perceive.

- Memory: The information it can store, retain, and recall.

- Effectors: The real-world or digital actions it can execute via APIs.

- Autonomy: The degree of independence it has in making decisions or setting goals.

- Coordination: The ability to orchestrate or collaborate with other agents and humans.

- Embedding: How deeply the agent is integrated within larger organizational systems and workflows.

Each of these levers can be adjusted, monitored, and logged, providing a practical toolkit for designers and security teams. This process of aligning an AI's reach with appropriate oversight is what Fjeldbonde terms "right-sizing the light cone."
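To make the idea of adjustable, auditable levers concrete, the sketch below records one level per lever and flags any lever where an agent's granted reach exceeds what was approved. It is an illustration only: the 0 to 3 scale, the `LeverProfile` class, the `oversized_levers` function, and the example values are all assumptions and do not come from Fjeldbonde's essay.

```python
from dataclasses import dataclass, fields

MAX_LEVEL = 3  # hypothetical scale: 0 = no reach, 3 = broadest reach


@dataclass(frozen=True)
class LeverProfile:
    """One level per lever; the 0..3 scale is an illustrative assumption."""
    sensors: int
    memory: int
    effectors: int
    autonomy: int
    coordination: int
    embedding: int

    def __post_init__(self):
        # Reject levels outside the assumed scale.
        for f in fields(self):
            level = getattr(self, f.name)
            if not 0 <= level <= MAX_LEVEL:
                raise ValueError(f"{f.name} must be in 0..{MAX_LEVEL}, got {level}")


def oversized_levers(granted: LeverProfile, ceiling: LeverProfile) -> list[str]:
    """Return the levers where granted reach exceeds the approved ceiling."""
    return [
        f.name
        for f in fields(granted)
        if getattr(granted, f.name) > getattr(ceiling, f.name)
    ]


# Hypothetical example: an email assistant approved for narrow reach.
ceiling = LeverProfile(sensors=2, memory=1, effectors=1,
                       autonomy=1, coordination=0, embedding=1)
granted = LeverProfile(sensors=2, memory=3, effectors=2,
                       autonomy=1, coordination=0, embedding=1)

print(oversized_levers(granted, ceiling))  # ['memory', 'effectors']
```

A check like this could run whenever an agent's configuration changes, so that any widening of a lever becomes an explicit, logged decision rather than a silent one.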

Risk Rises With Reach

Risk grows as an AI's operational reach expands through these levers, particularly when authority is delegated implicitly rather than through an explicit decision.

Quiet Delegation Inside Everyday Tools

Organizations often find that significant authority is delegated silently through AI assistants embedded in everyday tools like email, documents, and source-control systems. For example, a tool like Anthropic's Claude Cowork preview may gain the ability to read local files, execute code, or automate browser actions without explicit governance. Security researchers have demonstrated the resulting vulnerabilities: indirect prompt injection can exfiltrate sensitive data using the tool's own API keys, bypassing traditional audit logs.

In response, governance teams are implementing practical controls, many of which are already standard in corporate security policies:

- Explicit Approvals: Mandating dual sign-off or explicit human approval for high-risk actions (Effectors).

- Reversibility: Ensuring all changes are reversible through robust version control and rollback mechanisms.

- Segregation of Duties: Designing systems so no single agent possesses maximum capabilities across all six levers.

- Traceability: Maintaining tamper-evident logs that cover both model interactions and all resulting downstream actions.

- Data Retention Policies: Implementing strict schedules for the retention and deletion of data within agent memory layers.
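Two of the controls above, explicit approvals and traceability, can be sketched together in a few lines. The example below is a minimal illustration under stated assumptions, not a production design: the action names, the `AuditLog` class, and the `execute` function are hypothetical, and the log is a simple SHA-256 hash chain in which each entry commits to the one before it.

```python
import hashlib
import json

HIGH_RISK_ACTIONS = {"send_payment", "delete_repo"}  # hypothetical action names
GENESIS = "0" * 64  # placeholder hash for the first entry


class AuditLog:
    """Append-only log where each entry commits to the previous entry's hash."""

    def __init__(self):
        self.entries = []

    def append(self, record: dict) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        payload = json.dumps(record, sort_keys=True)
        digest = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
        self.entries.append({"record": record, "prev": prev_hash, "hash": digest})

    def verify(self) -> bool:
        """Recompute the chain; any edited record breaks it."""
        prev_hash = GENESIS
        for entry in self.entries:
            payload = json.dumps(entry["record"], sort_keys=True)
            expected = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
            if entry["prev"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


def execute(action: str, approvers: set[str], log: AuditLog) -> bool:
    """Allow an action only if it is low-risk or has two distinct approvers."""
    allowed = action not in HIGH_RISK_ACTIONS or len(approvers) >= 2
    # Denied attempts are logged too, so the trail covers every request.
    log.append({"action": action, "approvers": sorted(approvers), "allowed": allowed})
    return allowed


log = AuditLog()
print(execute("draft_reply", set(), log))               # True
print(execute("send_payment", {"alice"}, log))          # False
print(execute("send_payment", {"alice", "bob"}, log))   # True
print(log.verify())                                     # True

log.entries[1]["record"]["allowed"] = True  # simulate tampering with a record
print(log.verify())                                     # False
```

A chain like this only detects edits if the latest hash is also stored somewhere the agent cannot write to; otherwise an attacker could rebuild the whole chain after altering it.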

A Converging Vocabulary

Ultimately, whether the concept is framed as "light-cone resizing," setting capability thresholds, or defining dimensions of legitimacy, the core principle is consistent: delegated AI agency must be proportionate and accountable. Adopting a shared, practical vocabulary built around the six levers - Sensors, Memory, Effectors, Autonomy, Coordination, and Embedding - is a critical step for organizations to standardize audits, manage risk, and accelerate the safe adoption of advanced AI.