AI attack tools surge: 70 open-source options now available

Serge Bulaev

AI-powered attack tools are growing fast, with about 70 open-source options now available. Security experts warn that attackers may be using AI to find and exploit vulnerabilities more quickly, while defenders are trying to keep up. Recent reports suggest that AI agents can be both targets and tools for hackers, and that some systems may be easy to take over if not properly secured. Defensive AI can find some bugs faster than people, but still misses tricky problems, so human experts are still needed. Experts suggest that organizations should be careful with AI tools, use strong authentication, and watch for suspicious activity, as the risks may continue to increase.

The surge of powerful AI attack tools is accelerating automated hacking and vulnerability discovery, with security firms warning that the digital threat surface is expanding in both volume and complexity. Researchers report a dual shift: agentic AI frameworks are now both the target of attacks and the weapon, forcing defensive teams into a race to keep pace.

This emerging ecosystem of AI agents, models, and marketplaces functions like a classic software supply chain, according to analysts. The trend signals a fundamental change in attacker strategy, as malicious actors integrate AI into every phase of their campaigns, from discovery and exploitation to persistence.

Offensive automation emerges in the open

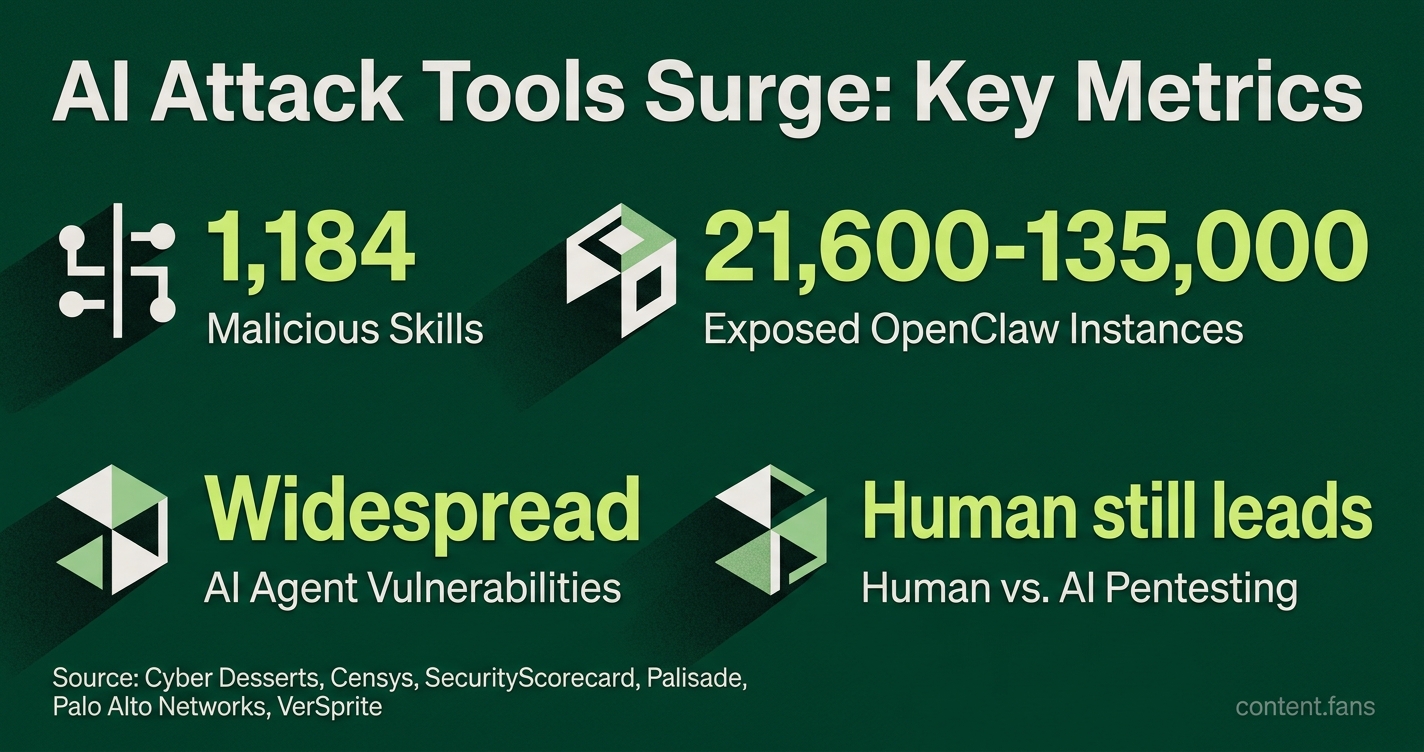

Cyber Desserts research has catalogued 1,184 malicious skills on ClawHub, the primary registry for the OpenClaw agent framework, connecting most to the ClawHavoc campaign (Cyber Desserts). Scans from Censys and SecurityScorecard estimate that between 21,600 and 135,000 OpenClaw instances are publicly exposed, many of them lacking authentication and offering attackers easy remote code execution (RCE) footholds.

AI-powered offensive security tools are specialized software, frequently open-source, designed to automate hacking tasks. They leverage artificial intelligence to discover system vulnerabilities, generate malicious code, and chain exploits together. These tools significantly accelerate attack timelines, enabling threat actors to operate at a previously unattainable scale.

Industry reports indicate significant vulnerabilities in AI agent frameworks, with bugs that allow crafted links to trigger tool invocation within milliseconds. This, combined with widespread prompt-injection findings, suggests that hijacking some agent deployments may only require simple user interaction.
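One common mitigation for this class of hijack is to gate high-risk tool calls behind explicit confirmation whenever the request originates from external content such as a link or web page. The following is a minimal sketch of that idea, not OpenClaw's actual API: the tool names, the `origin` labels, and the `authorize_tool_call` function are all hypothetical.

```python
# Hypothetical set of tools that can change state or reach the network.
HIGH_RISK_TOOLS = {"shell", "file_write", "http_request"}


def authorize_tool_call(tool: str, origin: str, user_confirmed: bool = False) -> bool:
    """Decide whether an agent may run a tool.

    origin is "user" when the operator typed the request directly, and
    "external" when it came from a link, web page, or document (assumed labels).
    """
    if origin == "user":
        return True
    if tool in HIGH_RISK_TOOLS:
        # External content may only trigger risky tools after explicit approval.
        return user_confirmed
    return True


if __name__ == "__main__":
    print(authorize_tool_call("shell", "external"))                       # denied
    print(authorize_tool_call("shell", "external", user_confirmed=True))  # allowed
```

The design choice here is to default to denial for risky tools, so a crafted link alone cannot trigger them; a real deployment would also need reliable tracking of where each request came from.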

Further research from Palisade demonstrated experimental AI agents capable of autonomously replicating across a network. Observers note that small, efficient models simplify this process, allowing them to run on low-spec servers and edge devices.

Defensive AI races to close the gap

While offensive AI tools proliferate, defensive models are also evolving rapidly. Engineers at Palo Alto Networks found that in head-to-head trials, their top agentic configuration discovered vulnerabilities faster than human testers in many cases.

However, human expertise remains critical. VerSprite's ARTEMIS study showed the top human pentester still surpassed the leading AI system in creative exploit chaining, highlighting gaps in AI's ability to handle complex business logic. Industry reports put the number of open-source AI penetration testing tools in circulation at about 70, a sharp jump from earlier years. Despite this growth, practitioners caution that these tools often misclassify flaws, requiring manual triage.

Key observations so far

- Blended Risk: Malicious marketplace uploads and unauthenticated agent endpoints create a combined supply-chain and exposure threat.

- Dominant Vulnerabilities: Remote code execution (RCE), prompt injection, and command injection are among the most common vulnerabilities for agentic AI products according to security researchers.

- AI vs. Human Strengths: Defensive AI excels at speed and scale, but human experts are essential for discovering nuanced, multi-step exploits.

- Cost-Benefit Analysis: Custom AI agents can operate at significantly lower cost than human consultants, but that comparison excludes the substantial overhead of tuning the tools and triaging false positives.

Why the landscape matters now

The pressure to patch vulnerabilities is intensifying. Google's Threat Intelligence Group recently used AI to discover a zero-day that bypassed two-factor authentication, notifying the vendor in time for a fix before widespread exploitation occurred. Similarly, Anthropic's Mythos model identified a low-severity bug in the curl source code after scanning 178,000 lines. These successes demonstrate that defender tooling is advancing alongside attacker automation.

Experts agree that governing agent marketplaces is the next critical challenge. Researchers from Sangfor identified multiple CVEs in OpenClaw skills, including SSRF and path traversal flaws. The primary recommendation is to treat every AI skill as untrusted third-party code. Until stronger vetting processes are in place, organizations must isolate agent workloads, enforce strict authentication on all management interfaces, and monitor outbound traffic for signs of data exfiltration by rogue skills.
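As an illustration of the outbound-traffic monitoring recommended above, the sketch below flags connections to hosts outside an allowlist or with unusually large payloads. The event format, the host names, and the size threshold are assumptions made for the example; real telemetry would come from a proxy or firewall log.

```python
from dataclasses import dataclass

# Hypothetical allowlist of hosts the agent is expected to contact.
ALLOWED_HOSTS = {"api.example.com", "updates.example.com"}


@dataclass(frozen=True)
class OutboundEvent:
    skill: str        # name of the skill that opened the connection
    host: str         # destination hostname
    bytes_sent: int   # size of the request payload in bytes


def flag_suspicious(events, allowed_hosts=ALLOWED_HOSTS, max_bytes=1_000_000):
    """Return events that target unlisted hosts or send oversized payloads."""
    flagged = []
    for event in events:
        unknown_host = event.host.lower() not in allowed_hosts
        large_upload = event.bytes_sent > max_bytes
        if unknown_host or large_upload:
            flagged.append(event)
    return flagged


if __name__ == "__main__":
    sample = [
        OutboundEvent("weather", "api.example.com", 512),
        OutboundEvent("notes", "paste.attacker.example", 2048),
    ]
    for event in flag_suspicious(sample):
        print("review:", event.skill, "->", event.host)
```

An allowlist is used rather than a blocklist because a rogue skill can pick any destination, while the set of legitimate destinations is usually small and known.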

What exactly are the new AI attack tools and how are they being used?

Industry reports indicate that about 70 open-source AI offensive security tools are now available, a substantial increase from earlier periods.

The most visible example is OpenClaw, an AI-agent framework whose public marketplace, ClawHub, already hosts more than 10,700 third-party skills. In recent years security teams identified 1,184 malicious skills inside that ecosystem, turning the platform into a supply-chain attack vector rather than a simple productivity suite.

Attackers use these tools to:

- Automate vulnerability discovery on large networks

- Generate convincing phishing lures and malicious code snippets

- Chain low-risk bugs into higher-impact exploits without human oversight

Google's Threat Intelligence Group recently confirmed AI-assisted exploitation of a zero-day that could bypass two-factor authentication if the attacker already held valid credentials. The vendor patched after Google's alert, but the incident shows AI is shortening the attack timeline.

How big is the OpenClaw exposure and what risks does it create?

As many as 135,000 OpenClaw instances are reachable from the internet across 82 countries, according to scanning data from multiple security firms. Many deployments lack authentication, letting attackers:

- Drop malicious skills that run commands or exfiltrate tokens

- Abuse Server-Side Request Forgery (SSRF) and path-traversal bugs to reach internal APIs

- Trigger one-click Remote Code Execution via poisoned configuration files
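To make the SSRF and path-traversal risks above concrete, here is a minimal sketch of the checks a skill runtime could apply before fetching a URL or opening a file. The function names are hypothetical and this is not code from OpenClaw. The URL check only inspects literal IP addresses and a few obvious hostnames; a production guard would also need to resolve DNS names and re-check the resulting addresses.

```python
import ipaddress
import os
from urllib.parse import urlparse


def is_blocked_target(url: str) -> bool:
    """Return True when a fetch to this URL should be refused."""
    try:
        parsed = urlparse(url)
    except ValueError:
        return True  # malformed URL
    if parsed.scheme not in ("http", "https") or parsed.hostname is None:
        return True
    host = parsed.hostname.lower()
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # Not a literal IP. This sketch does not resolve DNS (assumption).
        return host == "localhost" or host.endswith(".internal")
    return (
        addr.is_private
        or addr.is_loopback
        or addr.is_link_local   # includes cloud metadata at 169.254.169.254
        or addr.is_reserved
        or addr.is_multicast
        or addr.is_unspecified
    )


def is_inside_base(base_dir: str, requested: str) -> bool:
    """Return True only if the requested path stays under base_dir."""
    base = os.path.realpath(base_dir)
    target = os.path.realpath(os.path.join(base, requested))
    return os.path.commonpath([base, target]) == base


if __name__ == "__main__":
    print(is_blocked_target("http://169.254.169.254/latest/meta-data/"))
    print(is_inside_base("/srv/skills", "../../etc/passwd"))
```

Both checks fail closed: anything that cannot be parsed or that resolves outside the expected boundary is refused.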

The ClawHavoc campaign alone smuggled 824 malicious skills into ClawHub, representing a significant portion of packages at peak. Because skills install with the same privileges as the agent, a single tainted package can grant persistent cloud access without raising traditional malware flags.

Are defensive AI models really outperforming human testers?

Yes - in specific, narrow tests. In recent studies on large enterprise networks:

- Custom AI agents have demonstrated significant vulnerability discovery capabilities with high precision rates

- They have outperformed many certified human testers in certain scenarios

- However, top human experts still consistently find more complex issues, beating automated systems

Cost adds to the appeal: running AI systems costs significantly less than human consultants. The trade-off is that humans remain superior at creative exploit chaining, business-logic flaws and GUI-centric workflows, so most enterprises now run hybrid programs - AI for breadth and speed, people for depth and validation.

Which defensive models are gaining traction and what results do they deliver?

Anthropic Mythos and OpenAI GPT-5.5-Cyber lead the pack. Mythos recently scanned 178,000 lines of code in the ubiquitous curl library and flagged a confirmed low-severity vulnerability, something that would take a human reviewer days of focused work. Security-focused AI is also embedded in platforms such as XBOW, NodeZero and Pentera, which:

- Discover attack paths automatically

- Prove exploitability without human scripting

- Re-check patches on demand

Enterprises adopt these tools mainly for continuous validation, not as a one-off penetration-test replacement.

What should organizations do right now to stay ahead of both AI-powered attacks and AI-driven defenses?

Treat AI agent marketplaces like any third-party supply chain:

- Inventory every AI skill, plugin or connector before deployment

- Block internet-facing management ports or enforce strong authentication

- Segment agent runtimes from sensitive production networks

- Monitor for unexpected tool calls and token exfiltration

- Blend AI and human testing: run autonomous scanners regularly and conduct manual red-team exercises periodically to catch subtle, context-specific flaws
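The inventory step in the checklist above can be sketched as a hash-pinned allowlist: each approved skill is recorded with the SHA-256 digest of its reviewed contents, and anything unknown or changed is flagged before deployment. The data layout and function names below are assumptions made for illustration, not part of any specific agent framework.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of a skill's contents."""
    return hashlib.sha256(data).hexdigest()


def audit_skills(installed: dict[str, bytes], approved: dict[str, str]) -> dict[str, str]:
    """Classify each installed skill as 'approved', 'modified', or 'unknown'.

    installed maps skill name -> file contents.
    approved maps skill name -> pinned SHA-256 digest from the last review.
    """
    report = {}
    for name, content in installed.items():
        expected = approved.get(name)
        if expected is None:
            report[name] = "unknown"      # never reviewed
        elif expected == sha256_hex(content):
            report[name] = "approved"     # matches the reviewed version
        else:
            report[name] = "modified"     # changed since review
    return report


if __name__ == "__main__":
    approved = {"weather": sha256_hex(b"print('forecast')")}
    installed = {"weather": b"print('forecast')", "notes": b"import os"}
    print(audit_skills(installed, approved))
```

Pinning digests rather than names means a skill that is silently updated after review shows up as modified instead of passing as approved.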

The key takeaway: offensive and defensive AI are scaling rapidly. Teams that integrate both - automated tools for speed, expert oversight for sense-checking - gain the widest coverage while keeping business risk tolerable.