Harvard study finds OpenAI's o1-preview outperforms doctors in ER diagnoses

Serge Bulaev

A Harvard and Beth Israel study suggests OpenAI's o1-preview language model may list correct or very close emergency-room diagnoses more often than doctors, especially when information is limited. The model seems to work best in situations with the most uncertainty, but experts warn that being good at tests does not mean it is ready for real patient care. Researchers say more trials and stricter safety rules are needed before using it in hospitals. Some studies also show risks if these systems are used without enough oversight. Future research will need to see how well this tool actually helps patients and doctors in real situations.

A landmark Harvard study suggests OpenAI's o1-preview can outperform doctors in ER diagnoses, particularly when initial information is limited. Researchers from Harvard and Beth Israel Deaconess Medical Center found the large language model (LLM) provided a more accurate differential diagnosis than attending physicians in a retrospective analysis of 76 emergency room cases. Authors caution that the AI is not ready for clinical use without further prospective trials and robust safety protocols.

How o1-preview compares on key benchmarks

In a study using clinical vignettes, OpenAI's o1-preview achieved strong diagnostic accuracy rates, surpassing both human doctors and its predecessor, GPT-4. The model performed best in early triage scenarios with limited data, suggesting its potential as a powerful diagnostic aid in uncertain situations.

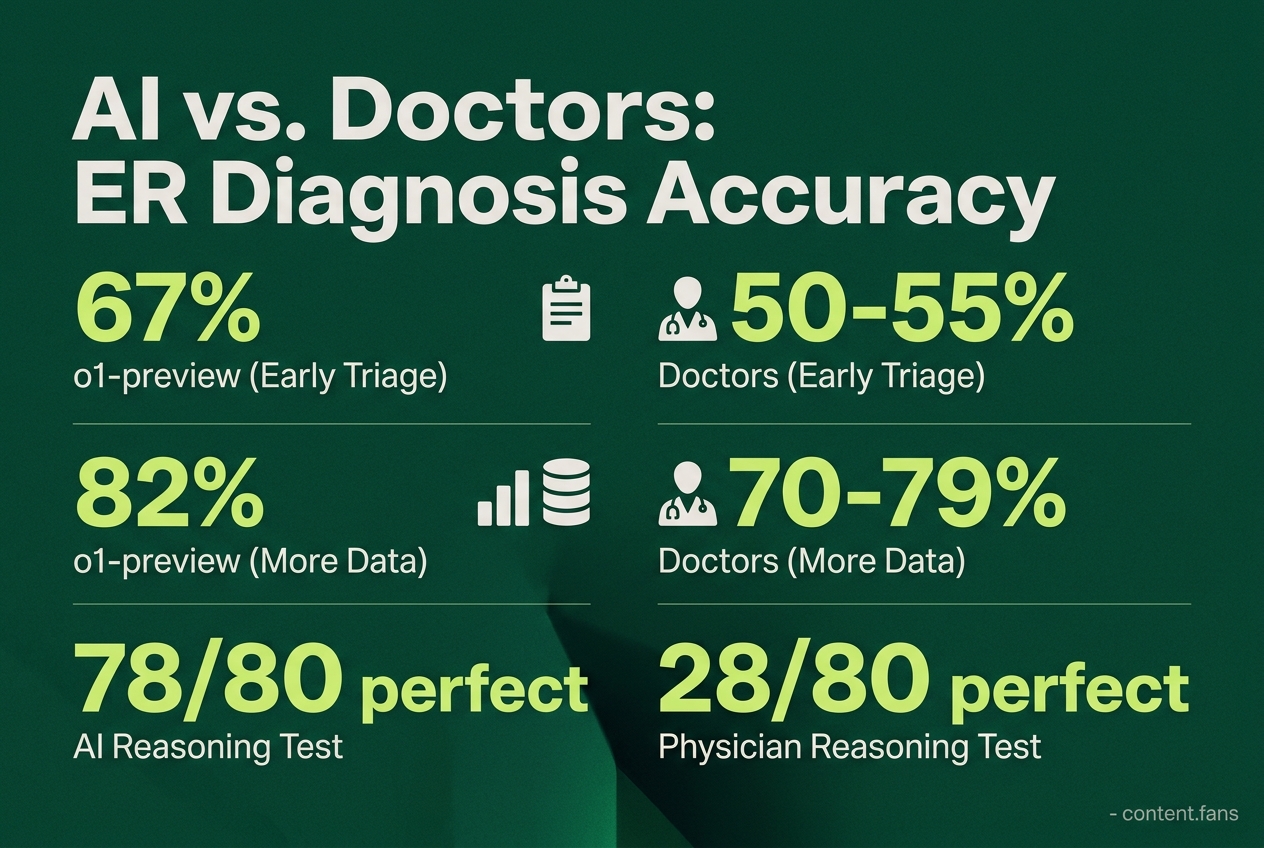

According to the study's preprint, o1-preview demonstrated superior accuracy on diagnostic vignettes compared to GPT-4 (arXiv PDF). On a separate 80-item reasoning test, the AI demonstrated superior performance, scoring perfectly on 78 items, whereas experienced physicians scored perfectly on 28 and residents on 16 (AIbase article).

The model's advantage was most pronounced during early triage. With limited patient information, o1-preview's diagnostic accuracy reached 67%, significantly higher than the 50-55% achieved by human doctors. As more data became available, the accuracy gap narrowed, with the AI reaching 82% and clinicians hitting 70-79%, a difference deemed not statistically significant (Gigazine report). This highlights its potential value when diagnostic uncertainty is at its peak.

Clinical trials and safety signals

The study's authors emphasize that these benchmark victories do not translate to immediate clinical readiness and call for rigorous real-world evaluation. Other research into decision-support AI in emergency settings shows both promise and potential pitfalls:

- Large-scale observational studies of AI triage tools have demonstrated improvements in correct high-acuity assignments and reductions in door-to-care times across many patient visits.

Conversely, a study from Mount Sinai revealed a significant risk: a consumer health chatbot incorrectly under-triaged over half of urgent cases, highlighting the dangers of unsupervised AI. To mitigate these risks, experts stress the need for clinician oversight, localized validation, and continuous performance monitoring as these models are integrated into clinical workflows.

Best-practice themes for second-opinion LLMs

Expert guidance strongly advocates for a "machine-in-the-loop" framework where physicians remain the ultimate decision-makers. Essential safeguards include HIPAA-compliant electronic health record (EHR) integration, comprehensive audit trails, demographic bias testing, and clear escalation protocols for high-risk cases. However, systematic reviews caution that evidence for improved efficiency is highly context-dependent and that benefits to diagnostic quality are not guaranteed in all settings.

Early case studies showcase tiered alert systems that allow clinicians to accept, modify, or dismiss AI suggestions. This approach helps manage the cognitive load of supervising the AI while aiming to balance safety with potential benefits, with several pilot programs reporting meaningful reductions in diagnostic errors.

What comes next

While o1-preview's superior performance in benchmarks signifies rapid advancement in AI capabilities, researchers stress the significant gap between retrospective studies and live patient care. Before widespread adoption, future controlled trials must rigorously assess critical factors including patient outcomes, workflow integration, and medical liability. Only after these elements are thoroughly understood can health systems responsibly move beyond the pilot phase.