FINRA 2026 Mandates AI Agent Traceability for Financial Firms

Serge Bulaev

FINRA's 2026 guidance signals that financial firms using AI agents must be able to trace and prove what those agents do, not just promise that they follow the rules. Firms may need to keep detailed logs of each agent's actions, especially for high-risk uses such as credit or fraud decisions, and to have humans approve sensitive decisions. There may also be new expectations for how data is accessed and stored, with clear limits on data use and records of it. Once agents are live, regular monitoring for errors and unexpected behavior is expected. Taken together, this suggests future audits may require live proof that a firm's controls actually worked each time an agent made a decision.

FINRA's 2026 report flags AI agent traceability and auditability as governance concerns and says firms must comply with existing FINRA rules when using GenAI; it does not impose a separate "AI agent traceability mandate." In practice, firms using AI agents need concrete, evidence-based proof of what those agents did, not just promises of compliance. FINRA describes its rules as technologically neutral and says they continue to apply to GenAI, with implications for supervision, communications, recordkeeping, and fair dealing. SR 11-7, the Federal Reserve's model risk management guidance, is a separate framework and not FINRA's own standard, as recent client alerts have highlighted.

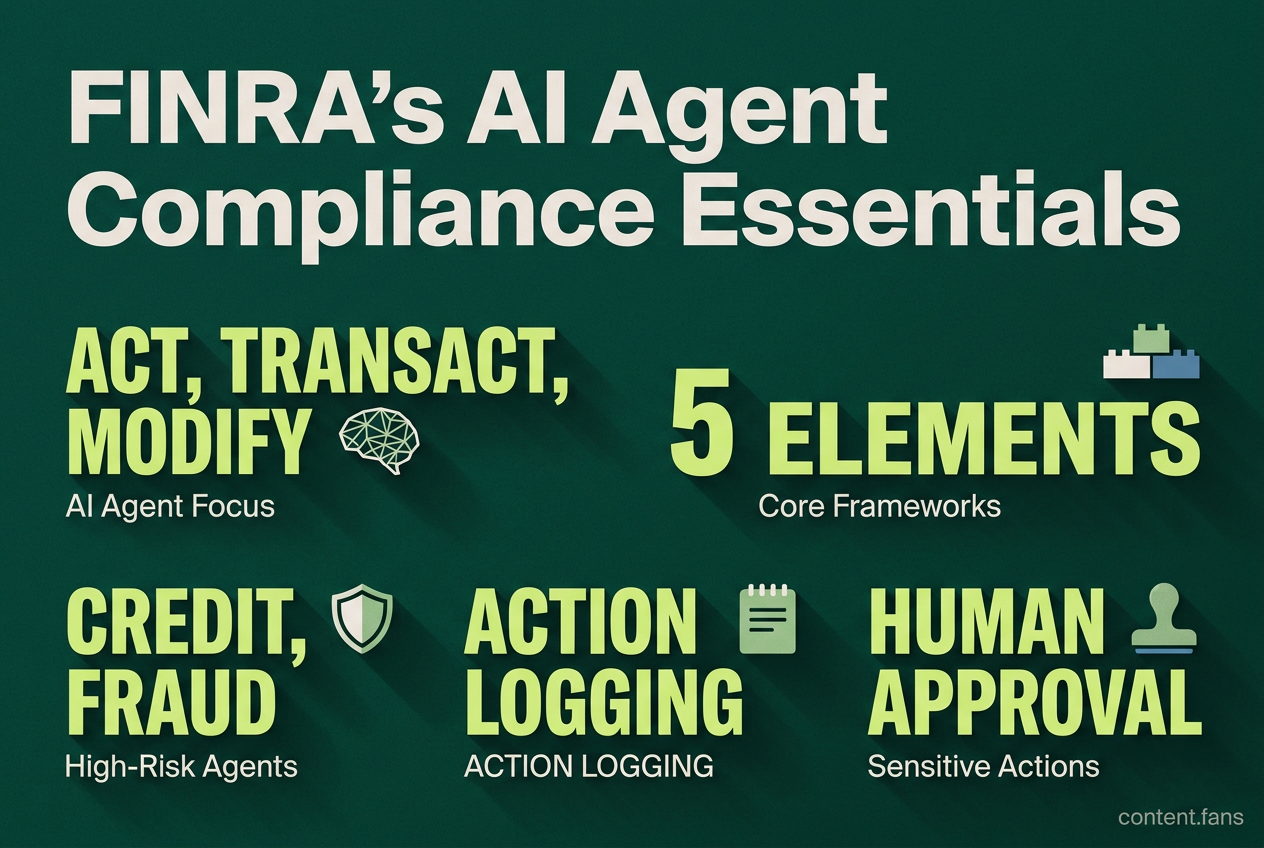

Regulators are now scrutinizing any AI agent with the capacity to act, transact, or modify records. This focus requires that governance frameworks extend beyond simple prompt reviews to encompass comprehensive action logging and granular permission controls.

Core framework elements

To meet these expectations, firms need a robust governance framework for their AI agents. That means inventorying all agents by risk level, assigning clear ownership, validating models before deployment, logging every action for traceability, and placing human approval checkpoints in front of sensitive operations so that accountability and control are demonstrable.

- Agent Inventory and Risk Classification: Categorize every agent by the risk of its use case (e.g., credit, fraud, customer service). High-risk agents, such as those for credit or fraud, warrant the most stringent controls; credit-scoring agents, for example, fall into the EU AI Act's high-risk tier.

- Establish Clear Ownership: Appoint specific owners from business, compliance, and technical teams for each agent. Because regulators favor technology-neutral controls, this ownership should fit into existing supervisory structures rather than sit outside them.

- Pre-Deployment Validation: Rigorously test models in a sandbox environment before launch. These tests should assess bias, vulnerability to attacks such as prompt injection, and the potential for unsafe automated actions (tool calls).

- Comprehensive Action Logging: Maintain detailed logs of all prompts, outputs, tool usage, and agent actions. Each entry must be timestamped to meet traceability standards, such as those in the EU AI Act's Article 12.

- Human-in-the-Loop for Sensitive Actions: Require human approval before any sensitive agent action executes. Narrow permission sets and explicit sign-offs can materially reduce a firm's exposure to enforcement actions.
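The action-logging element can be sketched as an append-only log in which every entry is timestamped and chained to the hash of the previous entry, so later edits become detectable. This is a minimal sketch, assuming a hypothetical in-memory `ActionLog` class and event schema; hash chaining is one common tamper-evidence technique, not something FINRA or Article 12 prescribes, and a production system would write entries to durable, write-once storage.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(entry: dict) -> str:
    """SHA-256 over a canonical JSON encoding of one log entry."""
    canonical = json.dumps(entry, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


@dataclass
class ActionLog:
    """Append-only, hash-chained log of agent events (hypothetical schema)."""

    entries: list = field(default_factory=list)

    def append(self, agent_id: str, event_type: str, payload: dict) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        entry = {
            "seq": len(self.entries),
            "ts": datetime.now(timezone.utc).isoformat(),  # UTC timestamp
            "agent_id": agent_id,
            "event_type": event_type,  # e.g. "prompt", "output", "tool_call"
            "payload": payload,  # must be JSON-serializable
            "prev_hash": prev_hash,
        }
        # Each hash covers the previous hash, so editing or deleting an
        # earlier entry breaks every link after it.
        entry["hash"] = _digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and link; False means the log was altered."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

Because each hash covers the one before it, `verify()` catches edits anywhere in the log and deletion of any entry except the newest; periodically anchoring the latest hash somewhere outside the log closes that remaining gap.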

Data minimization and privacy controls

Because AI agents often aggregate data from multiple sources, they can strain GDPR Article 5 principles such as purpose limitation and data minimization. If model or data drift changes what data an agent processes, or why, the original lawful basis may no longer cover the processing, and a new or updated Data Protection Impact Assessment (DPIA) may be required. Firms must be prepared to demonstrate:

- Field-level access controls that prevent agents from accessing non-essential data.

- Data retention policies that are strictly aligned with purpose-limitation principles.

- Audit logs detailing exactly which data fields were accessed, by which agent, and for what purpose.

- A clear map of any cross-border data transfers that occur when tool calls are handled by subprocessors.
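Field-level access control and the matching audit trail can be combined in a single gate that every agent data read passes through. The sketch below assumes a hypothetical `FIELD_POLICY` table keyed by agent and purpose and a hypothetical `read_fields` helper; a real deployment would more likely enforce this in the data layer than in agent code.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical policy: the fields each (agent, purpose) pair may read.
FIELD_POLICY = {
    ("kyc-agent", "identity_verification"): {"name", "date_of_birth", "address"},
    ("support-agent", "ticket_routing"): {"name", "account_type"},
}


@dataclass(frozen=True)
class AccessRecord:
    ts: str
    agent_id: str
    purpose: str
    fields: tuple


access_log: list = []  # in production this would go to durable audit storage


def read_fields(agent_id: str, purpose: str, record: dict, requested: set) -> dict:
    """Return only permitted fields and log exactly which ones were read."""
    allowed = FIELD_POLICY.get((agent_id, purpose), set())  # default: nothing
    denied = requested - allowed
    if denied:
        raise PermissionError(
            f"{agent_id} may not read {sorted(denied)} for purpose {purpose!r}"
        )
    result = {f: record[f] for f in sorted(requested) if f in record}
    access_log.append(
        AccessRecord(
            ts=datetime.now(timezone.utc).isoformat(),
            agent_id=agent_id,
            purpose=purpose,
            fields=tuple(result),
        )
    )
    return result
```

Because the policy key includes the purpose, the same agent can be granted different fields for different tasks, and each audit record answers which fields, which agent, and for what purpose in one row.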

Monitoring and drift detection

After deployment, continuous monitoring of AI agents is expected. Firms should track signals such as data drift, unexpected agent behavior, and rising rates of human overrides, typically on real-time dashboards. For compliance teams evaluating tools, the ability to produce traceable prompt and response records is a critical feature.
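Drift and override monitoring reduce to a few numbers recomputed on a schedule. The sketch below uses the Population Stability Index (PSI), one widely used drift statistic chosen here as an illustration, to compare the current distribution of an input feature with its validation baseline, together with a simple human-override rate. The function names, the dict schema for decisions, and both thresholds are hypothetical; 0.25 is a commonly cited PSI rule of thumb, not a regulatory limit.

```python
import math


def psi(expected_counts: list, actual_counts: list, eps: float = 1e-6) -> float:
    """Population Stability Index between a baseline and a current histogram.

    Both lists hold counts for the same bins, in the same order.
    """
    e_total, a_total = sum(expected_counts), sum(actual_counts)
    score = 0.0
    for e, a in zip(expected_counts, actual_counts):
        e_pct = max(e / e_total, eps)  # floor avoids log(0) on empty bins
        a_pct = max(a / a_total, eps)
        score += (a_pct - e_pct) * math.log(a_pct / e_pct)
    return score


def override_rate(decisions: list) -> float:
    """Share of human-reviewed agent decisions that the reviewer overrode."""
    reviewed = [d for d in decisions if d.get("reviewed")]
    if not reviewed:
        return 0.0
    return sum(1 for d in reviewed if d["overridden"]) / len(reviewed)


def drift_alerts(baseline: list, current: list, decisions: list,
                 psi_limit: float = 0.25, override_limit: float = 0.15) -> list:
    """Return alert names; both thresholds are illustrative placeholders."""
    alerts = []
    if psi(baseline, current) > psi_limit:
        alerts.append("input_drift")
    if override_rate(decisions) > override_limit:
        alerts.append("high_override_rate")
    return alerts
```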

Quick reference checklist

- Are all prompt, output, and action logs retained for the full statutory period?

- Is every high-risk decision gated by mandatory human approval?

- Can you fully reconstruct the decision-making process for any action taken by an agent?

- Is every data field accessed by an agent mapped to a lawful basis and a specific retention rule?

- Are all changes to models or prompts managed under a change control process that triggers re-validation?
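The last checklist item, change control that triggers re-validation, can be enforced by fingerprinting everything that defines an agent's behavior and comparing it with the fingerprint recorded at validation time. This is a sketch under assumptions: the config keys (`model`, `model_version`, `system_prompt`, `tools`) and both functions are hypothetical.

```python
import hashlib
import json
from typing import Optional


def config_fingerprint(config: dict) -> str:
    """Stable SHA-256 of everything that defines the agent's behavior."""
    # sort_keys makes the hash independent of dict key order; list order
    # (e.g. the tool list) still counts as a change.
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def needs_revalidation(config: dict, validated_fingerprint: Optional[str]) -> bool:
    """True if the config was never validated or differs from the validated one."""
    if validated_fingerprint is None:
        return True
    return config_fingerprint(config) != validated_fingerprint
```

A deployment pipeline could refuse to promote any agent for which `needs_revalidation` returns `True`, which turns an unreviewed prompt edit into a blocked release rather than a silent change.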

Risk Tiers by Use Case

- Lower-Risk Use Cases: Includes internal knowledge assistants, policy summarization bots, and automated ticket routing.

- Higher-Risk Use Cases: Includes critical functions like credit underwriting, fraud disposition, KYC onboarding, and investment advice.

The principle is simple: the higher the risk, the more stringent the requirements for pre-deployment validation, narrow permissions, and detailed evidentiary logging.
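The tiering above can be expressed as data so that each agent's required controls are checked mechanically. This minimal sketch assumes hypothetical use-case keys and control names; the baseline set mirrors the inventory, logging and override paths that even lower-risk agents need, and unknown use cases fall into the higher tier until someone classifies them.

```python
from enum import Enum


class RiskTier(Enum):
    LOWER = "lower"
    HIGHER = "higher"


# Hypothetical mapping of the use cases named above to tiers.
USE_CASE_TIERS = {
    "internal_knowledge_assistant": RiskTier.LOWER,
    "policy_summarization": RiskTier.LOWER,
    "ticket_routing": RiskTier.LOWER,
    "credit_underwriting": RiskTier.HIGHER,
    "fraud_disposition": RiskTier.HIGHER,
    "kyc_onboarding": RiskTier.HIGHER,
    "investment_advice": RiskTier.HIGHER,
}

BASELINE_CONTROLS = {"inventory", "action_logging", "human_override_path"}

# Higher-risk agents add the stricter controls on top of the baseline.
REQUIRED_CONTROLS = {
    RiskTier.LOWER: BASELINE_CONTROLS,
    RiskTier.HIGHER: BASELINE_CONTROLS | {
        "pre_deployment_validation",
        "least_privilege_permissions",
        "human_approval_gate",
        "evidentiary_logging",
    },
}


def classify(use_case: str) -> RiskTier:
    """Unclassified use cases default to the higher tier until reviewed."""
    return USE_CASE_TIERS.get(use_case, RiskTier.HIGHER)


def missing_controls(use_case: str, implemented: set) -> set:
    """Controls the tier requires that the agent does not yet have."""
    return REQUIRED_CONTROLS[classify(use_case)] - set(implemented)
```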

This framework addresses FINRA's guidance and also maps onto other regulations that apply to financial firms at the same time, including GLBA, PCI DSS, NYDFS Part 500, DORA, and GDPR. The trend suggests that future regulatory examinations will move beyond reviewing static policy documents and will instead ask firms for live, system-generated evidence that their controls were effective at every decision point.

What exactly is FINRA requiring under its 2026 guidance?

FINRA's 2026 guidance applies existing supervisory and recordkeeping rules to agents rather than creating a brand-new AI rule, and firms are layering familiar model-risk practices on top: prompt and output logging, version tracking, human checkpoints for high-risk actions, and least-privilege access for both human and non-human (service) accounts. In practice, if your agent touches anything regulated, from client onboarding to trade recommendations, you must be able to recreate the decision trail and show who or what approved every step.
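Recreating a decision trail amounts to pulling every logged event for one decision, ordering the events, and checking that a human approval preceded execution. The sketch below assumes each log event carries a hypothetical `decision_id` field and an ISO-8601 UTC timestamp string in one fixed format; the event type names are also illustrative.

```python
from collections import defaultdict


def reconstruct(events: list) -> dict:
    """Group log events by decision and order each group chronologically.

    Assumes ISO-8601 UTC "ts" strings in one fixed format, so that string
    order equals time order.
    """
    trails = defaultdict(list)
    for event in events:
        trails[event["decision_id"]].append(event)
    for trail in trails.values():
        trail.sort(key=lambda e: e["ts"])
    return dict(trails)


def unapproved_executions(trail: list) -> list:
    """Return "execute" events that were not preceded by a human approval."""
    approved = False
    problems = []
    for event in trail:
        if event["event_type"] == "human_approval":
            approved = True
        elif event["event_type"] == "execute" and not approved:
            problems.append(event)
    return problems
```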

Which use cases will face the toughest controls?

The higher the consequence, the heavier the governance. Credit decisions, fraud disposition, KYC onboarding and investment advice are treated as high-risk in most firms' frameworks and draw the closest supervisory attention. The EU AI Act, for example, explicitly classifies AI used to assess individuals' creditworthiness as high-risk, although it carves out AI used to detect financial fraud. Lower-risk cases such as internal knowledge bots or ticket triage can often stay under lighter oversight, but even those still need basic inventory, logging and human override paths.

How long must logs and audit trails be retained?

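Neither FINRA's guidance nor the EU AI Act fixes a single retention period for agent logs; the AI Act sets only a floor of at least six months for the automatically generated logs of high-risk systems. The practical expectation is to retain records long enough to satisfy supervisory examinations and model-risk reviews. Many firms are planning for three to seven years, in line with existing SEC and FINRA recordkeeping rules such as SEA Rule 17a-4 and FINRA Rule 4511. Where retention clashes with privacy regimes, segregated long-term storage is emerging as the compromise: for example, model outputs stored with PII removed, and user identifiers in access logs replaced with hashes.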
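The retention-versus-privacy compromise can be sketched as two small pieces: a keyed hash (HMAC-SHA256) that replaces raw user identifiers in access logs while keeping entries for the same user linkable, and a check that a record has outlived its retention period before it is deleted. The schedule values, record types and function names are hypothetical placeholders within the three-to-seven-year range mentioned above, not a statement of what any rule requires.

```python
import hashlib
import hmac
from datetime import date

# Hypothetical retention schedule in years; set these from your own
# recordkeeping analysis, not from this example.
RETENTION_YEARS = {"agent_action_log": 6, "prompt_output_log": 3}


def pseudonymize(user_id: str, key: bytes) -> str:
    """Keyed hash: same user -> same token, but not reversible without the key."""
    return hmac.new(key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def earliest_deletion(record_type: str, created: date) -> date:
    """First date on which the record may be deleted under the schedule."""
    years = RETENTION_YEARS[record_type]
    try:
        return created.replace(year=created.year + years)
    except ValueError:  # created on 29 Feb and the target year is not a leap year
        return created.replace(year=created.year + years, day=28)


def may_delete(record_type: str, created: date, today: date,
               legal_hold: bool = False) -> bool:
    """A legal hold always blocks deletion, whatever the schedule says."""
    return not legal_hold and today >= earliest_deletion(record_type, created)
```

A keyed hash is used rather than a plain one because, without the key, someone holding the logs cannot confirm a guessed identifier simply by hashing it; the firm, holding the key, can still re-link a known user to their log entries when an investigation requires it.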

What counts as "human oversight" under current guidance?

A rubber-stamp approval is no longer enough. Regulators want evidence of real-time capacity to intervene:

- Human review or sign-off before an agent executes anything that affects a customer account

- Escalation time limits that route an unanswered approval request to another reviewer (many firms are setting defined response windows for time-sensitive trading decisions)

- Override logs that capture who vetoed an agent and why
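The three oversight elements above (sign-off before execution, escalation time limits, and override logs) can be sketched as a small state machine around each pending action. This is a minimal sketch, assuming a hypothetical `PendingAction` record and an in-memory `override_log`; the key design choice is that a timed-out request is escalated, never silently approved.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class PendingAction:
    """An agent action held until a human decides (hypothetical schema)."""

    action_id: str
    agent_id: str
    description: str
    created: datetime
    timeout: timedelta  # escalation window; set per action type
    status: str = "pending"  # pending, escalated, approved or rejected
    decided_by: Optional[str] = None
    reason: Optional[str] = None


override_log: list = []  # who vetoed which agent action, and why


def check_timeout(action: PendingAction, now: datetime) -> str:
    """Escalate a stale request; an expired request is never auto-approved."""
    if action.status == "pending" and now - action.created > action.timeout:
        action.status = "escalated"
    return action.status


def decide(action: PendingAction, reviewer: str, approve: bool,
           reason: str, now: datetime) -> None:
    """Record a human decision; rejections are written to the override log."""
    if action.status not in ("pending", "escalated"):
        raise ValueError(f"{action.action_id} already {action.status}")
    action.status = "approved" if approve else "rejected"
    action.decided_by = reviewer
    action.reason = reason
    if not approve:
        override_log.append({
            "action_id": action.action_id,
            "agent_id": action.agent_id,
            "reviewer": reviewer,
            "reason": reason,
            "ts": now.isoformat(),
        })


def can_execute(action: PendingAction) -> bool:
    """The agent may act only after an explicit human approval."""
    return action.status == "approved"
```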

How should firms prepare right now?

- Inventory every agent - who owns it, what data it sees, what it can do.

- Map each workflow to regulatory risk level - high, medium, low.

- Patch logging gaps - many early pilots have partial or no traceability for tool calls.

- Draft human-in-the-loop playbooks - define red-flag thresholds and approval chains before launch.

- Align vendor contracts - insist on SLA clauses that guarantee log export and audit access within defined timeframes.