Agile Marketers Pivot to AI Governance as Non-Agile Teams Lag

Serge Bulaev

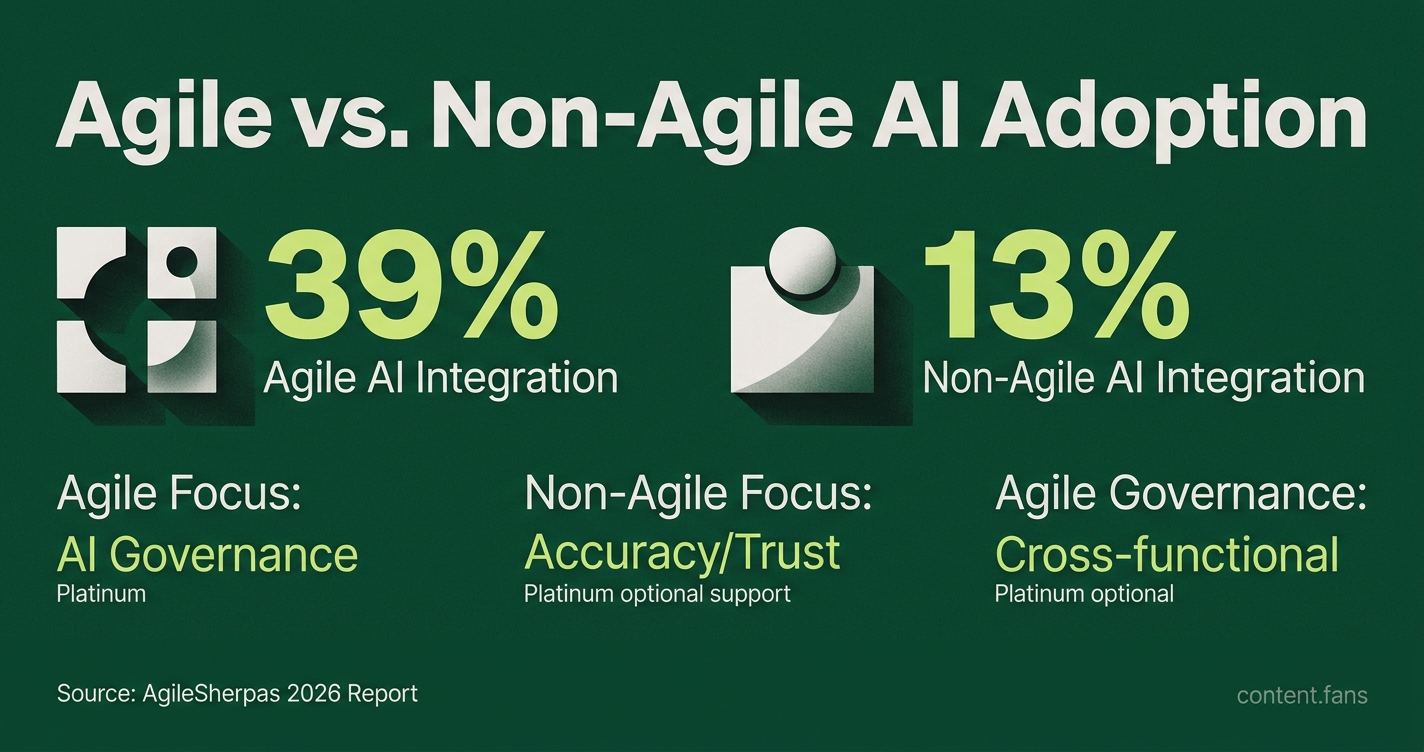

A recent survey suggests that agile marketing teams are far ahead of non-agile teams in adopting AI: 39 percent of agile groups have fully integrated AI, compared with only 13 percent of non-agile ones. Agile teams now face new challenges around AI governance and oversight, while non-agile teams still struggle with concerns about data quality and AI accuracy. Because the two groups face different problems, the report notes, each needs its own solutions. Teams that delay AI adoption over trust issues may fall even further behind as AI practices and requirements advance.

While many marketing teams struggle with AI's basic adoption challenges, agile marketers are already pivoting to the next frontier: AI governance. The AgileSherpas 2026 State of Agile Marketing report highlights this widening gap, finding that 39% of agile teams have fully integrated AI, three times the 13% rate among their non-agile peers. As agile teams tackle policy and oversight, non-agile groups remain stalled by fundamental doubts about AI accuracy and data quality. This divergence creates distinct challenges that call for tailored strategies rather than universal solutions.

Agile teams pivot from adoption to governance

Having successfully used AI for tasks like campaign optimization and audience segmentation, agile marketing teams are now shifting focus. They are establishing structured AI governance to manage regulatory compliance, brand safety, and ethical risks without sacrificing the speed and flexibility inherent to agile workflows.

Effective AI governance blueprints for agile teams prioritize unified data definitions, cross-functional oversight, and automated monitoring. This approach lets teams maintain sprint velocity while complying with privacy and bias regulations. Modern platforms, such as DataRobot's governance suite, integrate directly into existing ad tech stacks and offer features like version control, automated bias checks, and streamlined rollback workflows.

Industry best practices include:

- Establish an AI governance council with delegates from marketing (CMO), technology (CIO), finance, and legal to ensure holistic oversight.

- Implement a phased rollout, starting with checklist-based audits in early sprints and progressing to fully automated bias testing within a few cycles.

- Utilize real-time dashboards to monitor for anomalies in high-impact models, such as those driving programmatic bidding or customer propensity scores.

- Define clear autonomy boundaries for AI agents, embedding mandatory human approval triggers for high-stakes or high-spend decisions.
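
The last practice, autonomy boundaries with human approval triggers, is the easiest to express as an operational control. The sketch below is a minimal illustration, not a feature of any particular platform: the `AgentDecision` structure, the 10,000 spend threshold, and the set of high-stakes actions are all hypothetical placeholders that a governance council would define for itself.

```python
from dataclasses import dataclass

# Hypothetical policy values; a real governance council would set its own.
SPEND_APPROVAL_THRESHOLD = 10_000.0  # spend per decision that triggers review (assumed)
HIGH_STAKES_ACTIONS = {"launch_new_audience", "change_brand_messaging"}  # assumed examples


@dataclass
class AgentDecision:
    """A single action proposed by an AI agent (hypothetical structure)."""
    action: str
    spend: float


def requires_human_approval(decision: AgentDecision) -> bool:
    """True when the decision falls outside the agent's autonomy boundary."""
    return (
        decision.spend >= SPEND_APPROVAL_THRESHOLD
        or decision.action in HIGH_STAKES_ACTIONS
    )


def route(decision: AgentDecision) -> str:
    """Auto-execute decisions inside the boundary; queue the rest for a human."""
    return "queue_for_review" if requires_human_approval(decision) else "auto_execute"


if __name__ == "__main__":
    print(route(AgentDecision("adjust_bid", 250.0)))         # auto_execute
    print(route(AgentDecision("adjust_bid", 25_000.0)))      # queue_for_review
    print(route(AgentDecision("launch_new_audience", 0.0)))  # queue_for_review
```

Keeping the thresholds in one place makes the boundary auditable: the council can tighten or relax a value without touching the routing logic.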

According to industry reports, while many enterprises have model-risk frameworks, a significant portion lacks enforceable generative AI policies. This gap underscores the urgent need to embed governance rules directly into marketing workflows, turning them from static presentations into active operational controls.

Non-agile teams wrestle with accuracy and trust

For non-agile teams, the primary barrier to scaling AI is a lack of trust, with many teams citing accuracy and quality concerns. This skepticism is typically rooted in persistent issues such as fragmented data, limited model explainability, and inadequate team training. Overcoming these hurdles means building a foundation of trust first. As a marketer's guide from Data Axle emphasizes, that foundation is high-quality data: verified, complete customer records are essential for guarding against AI hallucinations and off-target personalization.

A structured playbook can help hesitant leaders advance from evaluation to controlled pilots:

- Conduct thorough audits of all customer data to identify and correct for incompleteness and bias before any model training begins.

- Prioritize explainable AI (XAI) models that reveal which data features most influence outcomes, enabling marketers to make rapid, informed creative adjustments.

- Consolidate disparate AI tools onto a single, unified platform so that AI-driven outputs expose, rather than conceal, broken underlying processes; SmartBrief highlights that concealment as a key risk.

- Implement comprehensive, company-wide training programs to enhance employees' editorial judgment and reduce the risks associated with unsanctioned "shadow AI" usage.
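
The first step of that playbook, a customer data audit, can begin with something as simple as measuring how complete each required field is. This is a minimal sketch, assuming customer records arrive as Python dictionaries; the field names and the 95% acceptance threshold are hypothetical, and a completeness check on its own does not detect bias.

```python
from typing import Iterable, Mapping

# Hypothetical required fields; substitute your own customer schema.
REQUIRED_FIELDS = ("customer_id", "email", "region", "consent")


def completeness_report(records: Iterable[Mapping[str, object]],
                        fields: tuple = REQUIRED_FIELDS) -> dict:
    """Share of records in which each required field is present and non-empty."""
    rows = list(records)
    if not rows:
        return {f: 0.0 for f in fields}
    report = {}
    for f in fields:
        # None and "" count as missing; any other value (including False) counts as filled.
        filled = sum(1 for r in rows if r.get(f) not in (None, ""))
        report[f] = filled / len(rows)
    return report


def fields_below(report: Mapping[str, float], threshold: float = 0.95) -> list:
    """Fields whose completeness falls under the (assumed) acceptance threshold."""
    return [f for f, share in report.items() if share < threshold]


if __name__ == "__main__":
    sample = [
        {"customer_id": "1", "email": "a@example.com", "region": "EU", "consent": True},
        {"customer_id": "2", "email": "", "region": "US", "consent": True},
        {"customer_id": "3", "email": "c@example.com", "region": None, "consent": False},
        {"customer_id": "4", "email": "d@example.com", "region": "US", "consent": True},
    ]
    report = completeness_report(sample)
    print(report)                # {'customer_id': 1.0, 'email': 0.75, 'region': 0.75, 'consent': 1.0}
    print(fields_below(report))  # ['email', 'region']
```

Fields flagged this way are candidates for correction or enrichment before any model training begins.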

The need for rigorous measurement is clear. Industry research reveals that a significant portion of C-suite executives do not benchmark their AI systems against hard metrics such as accuracy, precision, and fairness, and rely on vendor claims instead of empirical data. That reliance perpetuates the cycle of distrust.
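
Benchmarking against hard metrics does not require a vendor dashboard. The sketch below assumes binary 0/1 labels and predictions from a held-out evaluation set, plus one group label per record. Accuracy and precision follow their standard definitions; for fairness it uses the demographic parity gap (the spread in positive-prediction rates across groups), which is only one of several fairness metrics and was chosen here for illustration, not taken from the research cited above.

```python
from collections import defaultdict
from typing import Sequence


def accuracy(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Fraction of predictions that match the true labels."""
    if not y_true:
        raise ValueError("empty evaluation set")
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def precision(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Of the records predicted positive (1), the fraction that truly are positive."""
    predicted_pos = [t for t, p in zip(y_true, y_pred) if p == 1]
    return sum(predicted_pos) / len(predicted_pos) if predicted_pos else 0.0


def demographic_parity_gap(y_pred: Sequence[int], groups: Sequence[str]) -> float:
    """Largest difference in positive-prediction rate between any two groups.

    0.0 means every group is selected at the same rate.
    """
    positives, totals = defaultdict(int), defaultdict(int)
    for p, g in zip(y_pred, groups):
        totals[g] += 1
        positives[g] += p
    rates = [positives[g] / totals[g] for g in totals]
    return max(rates) - min(rates)


if __name__ == "__main__":
    y_true = [1, 0, 1, 1, 0, 0]
    y_pred = [1, 0, 0, 1, 1, 0]
    groups = ["A", "A", "A", "B", "B", "B"]
    print(round(accuracy(y_true, y_pred), 3))                # 0.667
    print(round(precision(y_true, y_pred), 3))               # 0.667
    print(round(demographic_parity_gap(y_pred, groups), 3))  # 0.333
```

Running the same three numbers on every model release, rather than once at procurement, turns vendor claims into something a team can verify over time.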

Measuring the widening integration gap

The performance gap between agile and non-agile teams is now quantifiable and significant. Research shows agile marketers are substantially more likely to report that AI frees up time for strategic work compared to their non-agile counterparts. This trend extends beyond marketing, with industry studies showing higher project success rates for agile initiatives and reports indicating that a growing number of agile software teams use AI assistants for daily tasks.

This data suggests a compounding advantage for organizations that have moved past initial adoption hurdles and are now focused on refining AI governance. Conversely, firms that remain hesitant due to trust and accuracy issues face a growing risk of being left behind, as robust data hygiene, model explainability, and performance benchmarks shift from being competitive advantages to fundamental requirements.