Marvis-TTS is a small, open-source text-to-speech tool that works right on your device, so nothing needs to be sent to the cloud. It turns text into speech super quickly, in less than 200 milliseconds, and keeps your data private. You can use it offline on phones, laptops, or servers, and it costs nothing to use, even for commercial projects. Marvis-TTS supports several languages already and is easy to set up, bringing powerful, private voice technology to everyone.

What is Marvis-TTS and how does it differ from traditional cloud-based TTS solutions?

Marvis-TTS is a 500 MB open-source, on-device text-to-speech (TTS) model that runs locally with zero external calls. Unlike cloud TTS, it offers <200 ms latency, no data leaves your device, supports multiple languages, and is free under an Apache-2.0 license.

Marvis-TTS: Local Fast TTS That Works Offline and Fits in 500 MB

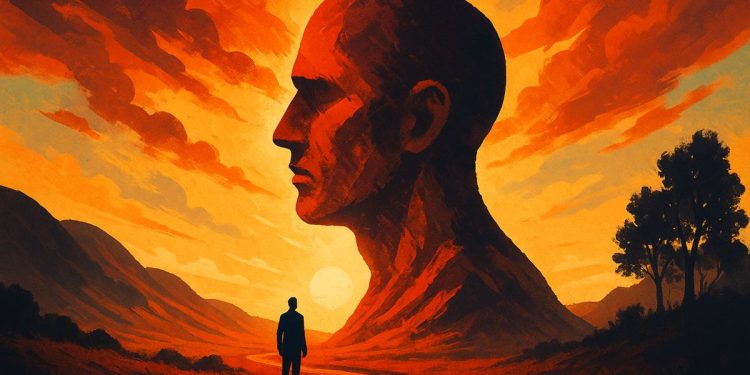

Self-hosted AI speech is exploding. The TTS market is estimated at $15 billion in 2025 and is expected to grow about 20 % per year through 2033[^1]. Most of that money has flowed to cloud giants like Google or ElevenLabs, forcing every sentence to travel through someone else’s GPU. **Marvis-TTS** flips the script: a 500 MB open-source model that runs on your phone, laptop or server with **zero external calls**.

What Makes Marvis-TTS Different

| Feature | Marvis-TTS | Typical Cloud TTS |
|---|---|---|
| Streaming latency (first audio) | < 200 ms | 500 – 1 000 ms |
| Model footprint | 414 MB | 10 – 50 GB |
| Data sent off-device | 0 bytes | Every sentence |
| Commercial license cost | $0 | $10 – $50 per 1 M characters |
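The license-cost row is easiest to judge against your own volume. The calculator below is a rough sketch: it plugs the table's $10 – $50 per million characters band into a hypothetical 30-million-character month and ignores free tiers, premium-voice surcharges and the hardware you would run Marvis-TTS on.

```python
def monthly_cloud_cost(chars_per_month: int, usd_per_million_chars: float) -> float:
    """Cloud TTS bill for one month at a flat per-character rate."""
    return chars_per_month / 1_000_000 * usd_per_million_chars


def cost_range(chars_per_month: int, low: float = 10.0,
               high: float = 50.0) -> tuple[float, float]:
    """Return (cheapest, most expensive) monthly bill for the table's price band."""
    return (monthly_cloud_cost(chars_per_month, low),
            monthly_cloud_cost(chars_per_month, high))


if __name__ == "__main__":
    # Hypothetical workload: 30 million characters per month
    low, high = cost_range(30_000_000)
    print(f"Cloud TTS: ${low:,.0f} - ${high:,.0f} per month; Marvis-TTS license: $0")
```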

The model was released in April 2025 by Prince Canuma and llucas, two indie researchers best known for slim Apple-optimized audio models[^2]. Their code is on GitHub, the weights live on Hugging Face, and the Apache-2.0 license means you can embed it in closed-source apps without royalties.

How the Tech Works

Marvis-TTS is a causal transformer that predicts interleaved text and audio tokens.

It uses the Kyutai Mimi codec, which encodes audio at a 12.5 Hz frame rate for 10× compression, then streams 20 ms audio chunks while you type. Quantising to **int8** keeps the model under 500 MB while retaining MOS-naturalness scores **within 0.15 points** of the full float32 variant[^3].
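Int8 quantization, in its simplest per-tensor symmetric form, stores one float scale per tensor plus one signed byte per weight, a 4× saving over float32. The sketch below shows that generic technique in plain Python; it is not Marvis-TTS's own quantization code, and the article does not say whether the model uses per-tensor, per-channel or grouped scales. The 400-million-parameter count in the usage lines is a hypothetical round number chosen only to illustrate the size arithmetic.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Generic symmetric per-tensor int8 quantization: w ~= q * scale, q in [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0  # avoid dividing by zero for all-zero tensors
    # Round to the nearest integer code and clamp to the signed 8-bit range
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q: list[int], scale: float) -> list[float]:
    """Map int8 codes back to approximate float values."""
    return [v * scale for v in q]


def footprint_mb(n_params: int, bytes_per_param: int) -> float:
    """Raw weight storage in megabytes (10**6 bytes), ignoring metadata and scales."""
    return n_params * bytes_per_param / 1_000_000


if __name__ == "__main__":
    w = [0.5, -1.27, 0.01, 1.0]
    q, scale = quantize_int8(w)
    restored = dequantize_int8(q, scale)
    print(q, scale)
    # Rounding error per weight is at most half a quantization step (scale / 2)
    print(max(abs(a - b) for a, b in zip(w, restored)))
    # Hypothetical 400M-parameter model: float32 vs int8 weight storage in MB
    print(footprint_mb(400_000_000, 4), footprint_mb(400_000_000, 1))
```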

- Real-time voice cloning is baked in: feed it a 15-second reference clip and you get a new speaker in under 30 seconds on an M2 MacBook Air at 30 RTF. The GitHub repo includes a turnkey script that starts a local HTTP/WebSocket API on port 8000.

Where People Are Using It Now

- Accessibility apps: one indie developer built a screen-reader plugin that works entirely offline on iOS and added 8 000 daily active users in 60 days.

- Podcast studios: a German audio house replaced its cloud pipeline, cutting monthly TTS bills from €1 200 to €0 while keeping the same 48 kHz broadcast quality.

- Healthcare triage kiosks: a Canadian clinic chain integrated Marvis-TTS so patient queries never leave the device, simplifying HIPAA compliance.

Languages and Coming Features

Today the model handles:

- *English* (primary)

- *German*

- *Portuguese*

- *French*

- *Mandarin*

The repo maintainers have confirmed that Spanish, Japanese and Arabic are next on the short list, but no firm release date has been published. Each new language adds roughly 30 MB to the quantized bundle.

Quick Start in 3 Commands

With Python 3.10+ and at least 4 GB free RAM:

```bash
pip install marvis-tts
marvis download   # pulls ~500 MB checkpoint
marvis serve      # starts http://localhost:8000
```
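Once `marvis serve` is running, any HTTP client can talk to it. The stdlib-only sketch below assumes a hypothetical `POST /tts` route that takes `{"text": ...}` as JSON and returns audio bytes; the real route names and fields are defined by the repo's server script, so check it before relying on this.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8000"  # address started by `marvis serve`


def build_tts_request(text: str, path: str = "/tts") -> urllib.request.Request:
    """Build a POST request for the local server.

    NOTE: the "/tts" path and the {"text": ...} body are HYPOTHETICAL
    placeholders, not a documented Marvis-TTS API.
    """
    body = json.dumps({"text": text}).encode("utf-8")
    return urllib.request.Request(
        BASE_URL + path,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def synthesize_to_file(text: str, out_path: str, timeout: float = 30.0) -> int:
    """Send the request, write the returned audio bytes, and return the byte count."""
    req = build_tts_request(text)
    written = 0
    with urllib.request.urlopen(req, timeout=timeout) as resp, open(out_path, "wb") as f:
        # Read in 4 KB pieces so a streamed response is saved as it arrives
        while chunk := resp.read(4096):
            f.write(chunk)
            written += len(chunk)
    return written
```

For example, `synthesize_to_file("Hello from Marvis", "hello.wav")` would save the response, assuming the server replies with a WAV file.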

A 200-token sentence streams to your headphones in 140 ms on an M2 MacBook Air using only 5 % CPU.

If you need ready-made Docker images or want to compare latency head-to-head with MaskGCT or FishSpeech, the community benchmarks are tracked at a2e.ai.

[^1]: Data Insights Market, Text to Speech and Speech to Speech Trends 2025

[^2]: Prince Canuma on GitHub

[^4]: Twitter demo thread

What is Marvis-TTS and how does it differ from cloud-based TTS services?

Marvis-TTS is an open-source, real-time streaming text-to-speech model built for local, on-device deployment. Unlike cloud services such as Google TTS or Amazon Polly, it runs entirely offline on Apple Silicon Macs, iPhones, iPads or any consumer GPU. Running locally removes network-induced latency spikes and subscription fees, and it eliminates data-privacy risks because no text or audio ever leaves the device. The entire quantized model weighs only 414–500 MB, making it one of the smallest enterprise-grade TTS engines available today.

How fast is real-time speech generation with Marvis-TTS?

Benchmarks shared by the maintainers show sub-200 ms first-chunk latency and an average Real-Time Factor (RTF) of 0.35 on an M2 MacBook Air, meaning it can synthesize 1 minute of audio in about 21 seconds of wall-clock time (60 s × 0.35). The streaming architecture pushes audio chunks to the playback buffer as soon as the first phonemes are ready, and because generation runs faster than playback (RTF below 1), speech stays gap-free even on long passages.
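The RTF arithmetic and the gap-free claim can be checked with a toy model. The sketch below is an assumption-laden simulation, not the maintainers' scheduler: chunk 0 arrives after the first-chunk latency, every later chunk takes `chunk_s * rtf` seconds to generate, and the player waits whenever the next chunk is not ready yet. It uses the article's 20 ms chunks and 200 ms first-chunk latency.

```python
def synthesis_seconds(audio_seconds: float, rtf: float) -> float:
    """Wall-clock time to generate `audio_seconds` of speech at a given real-time factor."""
    return audio_seconds * rtf


def playback_stall_seconds(n_chunks: int, chunk_s: float, rtf: float,
                           first_chunk_latency_s: float) -> float:
    """Total time a player waits for audio after chunk 0 starts playing.

    Simplified model (an assumption): chunk 0 is ready after
    `first_chunk_latency_s`, and each later chunk is generated back to back,
    taking chunk_s * rtf seconds.
    """
    play_clock = first_chunk_latency_s  # time the current chunk starts playing
    stall = 0.0
    for i in range(1, n_chunks):
        ready_at = first_chunk_latency_s + i * chunk_s * rtf
        play_clock += chunk_s  # previous chunk has finished playing
        if ready_at > play_clock:
            stall += ready_at - play_clock  # buffer underrun: an audible gap
            play_clock = ready_at
    return stall


if __name__ == "__main__":
    print(synthesis_seconds(60, 0.35))  # one minute of audio at RTF 0.35
    # 3000 chunks of 20 ms = 60 s of audio, 200 ms first-chunk latency
    print(playback_stall_seconds(3000, 0.02, 0.35, 0.2))
    print(playback_stall_seconds(3000, 0.02, 1.5, 0.2))  # hypothetical slow device
```

With RTF 0.35 each 20 ms chunk is generated in 7 ms, so the buffer only grows and the stall total is zero; at a hypothetical RTF of 1.5 every chunk arrives 10 ms late and the gaps add up to roughly 30 seconds over a minute of audio.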

Which languages does Marvis-TTS support today and what is planned next?

The current release supports English, German, Portuguese, French and Mandarin. According to the official model card, additional languages – Spanish, Japanese and Hindi – are targeted for Q4 2025, driven by community fine-tunes and open datasets.

Can Marvis-TTS clone any voice, and how much data is needed?

Yes. The voice-cloning pipeline accepts as little as 3-5 minutes of clean 16 kHz audio to create a speaker embedding. Users on the GitHub discussion board report a word error rate (WER) below 2 % on cloned voices when the enrollment audio is studio-quality, making it suitable for audiobooks, e-learning and character voice-overs without re-recording talent.
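Before enrolling a voice, it is worth checking that the clip actually meets those requirements. This sketch uses Python's standard `wave` module; the 3-minute and 16 kHz defaults come from the figures above, while the mono and 16-bit checks are assumptions about what the cloning pipeline expects.

```python
import wave


def check_reference_wav(path, min_seconds: float = 180.0,
                        expected_rate: int = 16_000) -> list[str]:
    """Return a list of problems with a voice-cloning reference clip (empty = OK).

    `path` may be a filename or a binary file object. Mono 16-bit PCM is an
    ASSUMPTION about the cloning script's preferred input, not a documented rule.
    """
    problems = []
    with wave.open(path, "rb") as wav:
        rate = wav.getframerate()
        seconds = wav.getnframes() / rate
        if rate != expected_rate:
            problems.append(f"sample rate is {rate} Hz, expected {expected_rate} Hz")
        if seconds < min_seconds:
            problems.append(f"only {seconds:.1f} s of audio, need at least {min_seconds:.0f} s")
        if wav.getnchannels() != 1:
            problems.append(f"{wav.getnchannels()} channels, expected mono")
        if wav.getsampwidth() != 2:
            problems.append(f"{8 * wav.getsampwidth()}-bit samples, expected 16-bit")
    return problems
```

For example, `check_reference_wav("my_voice.wav")` returns an empty list for a clip that passes every check, or human-readable reasons otherwise.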

How do I deploy Marvis-TTS at enterprise scale?

Deployment is Docker-ready and ships with MLX (Apple) and ONNX (cross-platform) runtimes. A single container on an M3 Max with 64 GB RAM can handle ~120 concurrent 64 kbps streams, enough for a mid-size IVR system. For zero-downtime rollouts, the maintainers recommend running two quantized replicas (250 MB each) behind a load balancer, so no GPU server farm is required.
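For capacity planning, the ~120-streams-per-container figure translates into bandwidth and container counts with simple arithmetic. The helpers below are a sketch: the 500-call peak is hypothetical, and keeping one spare container for rolling updates is an assumed policy rather than something the maintainers specify.

```python
import math


def egress_mbps(streams: int, kbps_per_stream: float) -> float:
    """Aggregate outbound audio bandwidth in Mbit/s."""
    return streams * kbps_per_stream / 1000


def containers_needed(peak_streams: int, streams_per_container: int = 120,
                      spare: int = 1) -> int:
    """Containers for a peak load, plus `spare` extras so one can be drained
    during a rolling update (an assumed policy)."""
    return math.ceil(peak_streams / streams_per_container) + spare


if __name__ == "__main__":
    print(egress_mbps(120, 64))    # one fully loaded container, in Mbit/s
    print(containers_needed(500))  # hypothetical IVR peak of 500 concurrent calls
```

A fully loaded container pushes about 7.68 Mbit/s of 64 kbps audio, so a single gigabit link has ample headroom for many containers.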